9 Supplement - Behavioural economics: Selected further concepts

Note for 2020-2021: As Exeter has retained the MSc module in Behavioural Economics, this content will not be a core part of the module.

Some simple discussion of key concepts from behavioral economics and from my own research from previous lectures (available within Exeter only)

Includes discussion of:

Allais Paradox at 12:51

A ‘loss vs gain’ framing scenario (Kahneman and Tversky) at about 22:30

Loss aversion and prospect theory, applied to both of the above scenarios, at about 25:00

Limited willpower and ‘hyperbolic discounting’ at about 28:15

Other-regarding preferences in games at about 30:00

My research on ‘barriers to effective giving’ at about 48:00

Thou shalt be carefully sceptical: critique, do not just criticise; consider the assumptions made; consider the evidence.

9.1 Neoclassical versus Behavioural economics (simple discussion)

(Neo)classical economics assumes:

Firms maximise profit

Individuals/households have a consistent utility function which they act ‘rationally’ to maximise

Furthermore, it is mainly focused on own consumption, ignores ‘cognitive costs’, ignores ‘malleable and changing preferences’

Behavioural economics, in contrast, considers cases where people behave as if

they are not acting optimally to maximize their welfare given these preferences, or

their preferences are not internally consistent (e.g., not transitive), are not fully self-interested (altruism),

or are otherwise difficult to model (e.g., depending on the manner in which they make choices and not only on the outcomes).

Classical economics assumes

- Optimization of a consistent ‘normal’ utility function subject to known constraints

- Only own consumption matters?

- No cost to gather information or make calculations?

(Perhaps) expected utility preferences over gambles/investments, and probabilities are accurately known.

Geometric discounting of future payoffs with a single discount rate … or at least a ‘consistent’ discount rate.

What do I mean by a ‘consistent’ discount rate?

The discount rate between two periods or two calendar years is allowed to vary in the classical model.

However, it must be consistent in the following sense: the discount rate may depend only on which periods the consumption is being traded off between.

It should not depend on which period I am in when I am making the decision. More on this under ‘hyperbolic discounting’, time-inconsistency, and impatience, below.

E.g., if \(\delta=0.1\) in each period then next period’s payoff of 100 is worth as much as \((1-0.1) \times 100 = 90\) today.

A payoff of 100 in two periods is worth as much as \((1-0.1) \times 100 = 90\) tomorrow, or as much as \((1-0.1) \times (1-0.1)\times 100 = 81\) today.
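The arithmetic above can be checked with a short Python sketch. The function name `present_value` is an illustrative assumption, not something from the lecture; following the text, \(\delta\) is treated as a per-period discount rate, so each period of delay multiplies value by \(1-\delta\).

```python
def present_value(payoff, periods_ahead, delta=0.1):
    """Value now of a payoff received `periods_ahead` periods from now,
    using a constant per-period discount rate `delta` (geometric discounting)."""
    return payoff * (1 - delta) ** periods_ahead


# Values as seen from today (period 0)
pv_next = present_value(100, 1)   # 100 received next period
pv_two = present_value(100, 2)    # 100 received in two periods

# The same period-2 payoff as seen from tomorrow (period 1): one period away
pv_two_from_tomorrow = present_value(100, 1)

print(f"100 next period is worth {pv_next:.2f} today")
print(f"100 in two periods is worth {pv_two:.2f} today")
print(f"100 in two periods is worth {pv_two_from_tomorrow:.2f} tomorrow")

# 'Consistency': the weight on period 2 relative to period 1 is the same
# whether the trade-off is evaluated today or tomorrow.
ratio_seen_today = pv_two / pv_next
ratio_seen_tomorrow = present_value(100, 1) / present_value(100, 0)
print(f"relative weight seen today: {ratio_seen_today:.2f}, "
      f"seen tomorrow: {ratio_seen_tomorrow:.2f}")
```

The last two numbers coincide, which is the sense of ‘consistent’ used here: the trade-off between two given periods does not depend on when the decision is made.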

Strategic behaviour in interactions: Common knowledge of rationality, Nash equilibrium?

We can find behaviour and outcomes that seem to contradict the above assumptions (see, e.g., DellaVigna, 2009). This ‘non-classical’ behaviour is (arguably) common, significant, and follows predictable patterns. Should we then stop learning classical economics?

9.1.1 Classical economists are not naïve

They know preferences change, people make mistakes, etc.

Justifications include:

- ‘Most people, most of the time,’ and many mistakes will be ‘fixed’ by the market.

- Strong predictive power

- ‘Normative’: how we ‘should’ behave to get the best outcomes

- A good starting point, framework for insight, benchmark

9.1.2 Behavioural Economics

Relaxes some of the assumptions above, usually one at a time

Does not typically ‘reject rationality’ (instead we hear of ‘bounded rationality’)

Most “behavioral models” involve some sort of optimization (and often equilibrium)! There are ‘pure behavioral theorists’ who try to find parsimonious models to explain deviations.

Most influential authors: Kahneman and Tversky

9.1.3 Modern consensus/entente

Both classical and behavioural economics are useful

- Different models and techniques (for different spheres?)

A ‘compromise’ position? … It is worth testing for, and admitting the possibility of ‘systematic violations’ of the classical assumptions.

Classical economics is usually seen as more relevant for firm behaviour. It may (or may not) predict well for aggregates and for firm behaviour. Behavioural models may predict better for individual behaviour in isolation

… but behavioural admits many possibilities and heterogeneous behavior, so it makes broad predictions difficult

Behavioural insights are (probably) useful in making policy (and in marketing).

Evidence from the lab and small-scale field settings may be informative, but some ‘behavioural anomalies’ may be inconsequential in larger markets in equilibrium.

9.2 Limits to Human Decision Making: An Overview

We might classify four general ways in which people diverge from classical assumptions:

Limited cognitive ability – difficult and costly to make complex decisions

Limited willpower – self control problems

Limited self interest – care about others (fairness, altruism), issues beyond income/consumption

Inconsistent, changing, and ‘non-outcome-based’ preferences

Under (e.g.) Prospect Theory people may be acting to maximise their true preferences, but their true preferences may change depending on reference points etc.

9.2.1 Limited cognitive ability

- Complexity of problems

- Optimising calculations take time; this may itself be a cost

- A ‘simplifying decision-making rule’ (rule-of-thumb) may be better if it saves these costs

- Relevant both to individual decision-making (consumption, investment) and strategic choices

- Game theory: recall ‘iterated strict dominance’ and ‘backwards induction’

- People (seem to) systematically misunderstand probabilities (and other maths concepts)

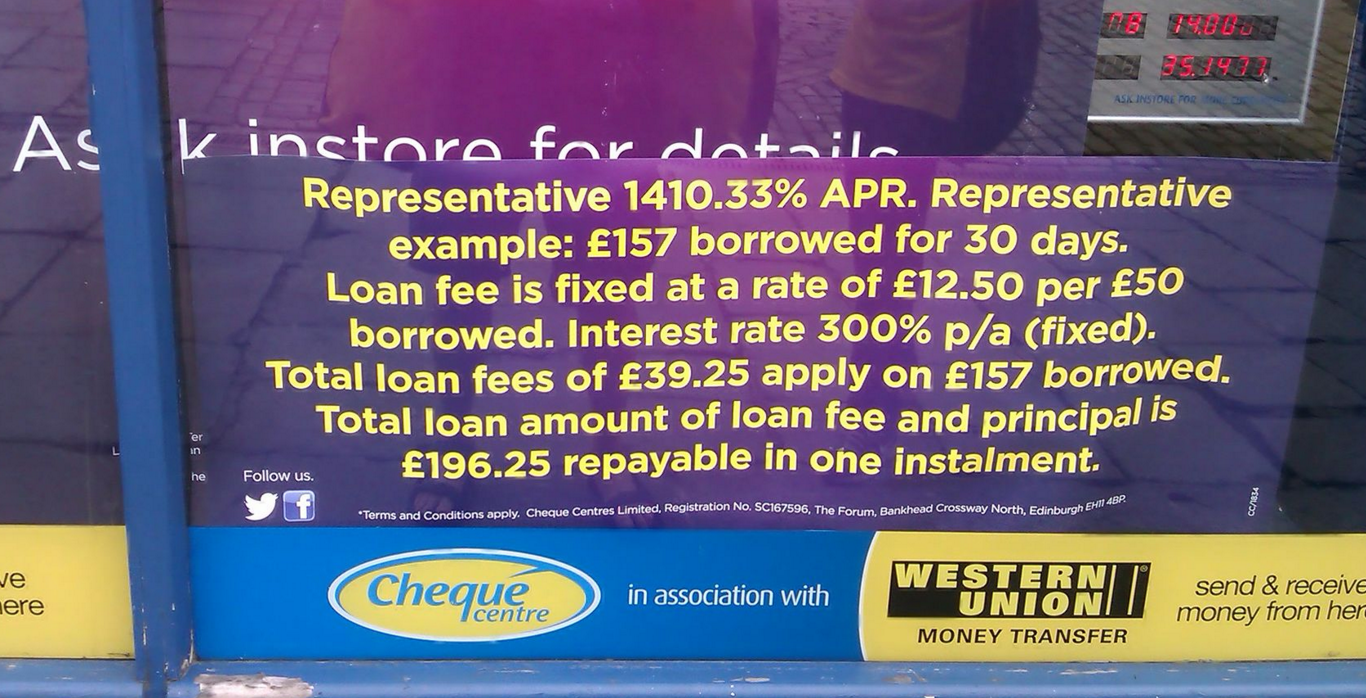

Application: lack of financial literacy

- Consumers who underestimated interest rates in quizzes held the highest interest loans in real life

- Particularly when government ‘truth in lending laws’ were lax

Evidence from Stango and Zinman, 2011

A ‘quiz…’

- Suppose you had £100 in a savings account and the interest rate was 2% per year. After 5 years, how much do you think you would have in the account if you left the money to grow:

A. More than £102

B. Exactly £102

- Imagine that the interest rate on your savings account was 1 percent per year and inflation was 2 percent per year. After 1 year, would you be able to buy:

A. more than

B. exactly the same as, or

C. less than today

- Do you think that the following statement is true or false? ‘Buying a single company stock usually provides a safer return than a stock mutual fund.’

(True/False)

Answers: A; C; False.

Around the world, across many studies, less than half answer all three of the above questions correctly.

Source: Lusardi and Mitchell, 2013. In Germany and Switzerland, just over 50% get all three right. In most other surveyed countries, the numbers are far lower. E.g., in the USA 65% get the interest rate question right, 64% the inflation question, and 52% the diversification question.
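For reference, the arithmetic behind the first two quiz questions can be sketched in a few lines of Python. Annual compounding is an assumption here (the question does not state the compounding frequency), and the variable names are illustrative.

```python
# Question 1: £100 at 2% per year, left to grow for 5 years
# (annual compounding assumed)
balance = 100 * (1 + 0.02) ** 5
print(f"Balance after 5 years: £{balance:.2f}")
print("More than £102?", balance > 102)

# Question 2: 1% interest and 2% inflation over one year.
# Purchasing power changes by the ratio of the two growth factors.
real_growth = (1 + 0.01) / (1 + 0.02)
print(f"Purchasing power after 1 year: {real_growth:.3f} times today's")
print("Able to buy less than today?", real_growth < 1)
```

Even simple (non-compounded) interest would give more than £102 after 5 years, so the answer to the first question does not hinge on the compounding assumption.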

9.3 Limited willpower and ‘hyperbolic discounting’

Notes:

In the Stanford experiments of the 1960s-70s, children could have 1 marshmallow now or 2 in 15 minutes if they could resist temptation.

There is some evidence that this correlates to later life outcomes (test scores, degrees, BMI, etc).

Strong results; interpretation debated.

The strongest results have failed to replicate. See a popular discussion here.

There is also some criticism of the interpretation of the results. The kids’ behaviour may have been driven not only by impatience but also by how much they trusted the experimenters. Kids brought up in low-trust environments may have trusted the experimenters less to ‘come through’ with the second marshmallow. They may also have tended to face other obstacles to their academic and professional success.

Monday-David wants Tuesday-David to give up 1 marshmallow for 2 marshmallows on Wednesday.

But Monday-David is unwilling to give up 1 marshmallow for 2 marshmallows on Tuesday.

Similarly, Tuesday-David won’t give up 1 marshmallow for 2 on Wednesday

- Inconsistency: Monday-David thinks Tuesday-David is acting sub-optimally!

- Is David acting in his own interest? Can we even define his ‘true utility’?

- Hyperbolic discounting (simple version)

- Steep drop in ‘weight \(w\)’ on payoffs earned after the current period

- But constant weight (or constant discounting) for payoffs multiple periods in the future

- This leads to inconsistencies between ‘planned’ and chosen behaviour

Compare two ‘streams of payoffs’

Work: -10 today, +2 for the next 6 days

Lazy: -5 today, +1 for next 6 days

Examples: Should you…?

study very hard on Sunday to be prepared for the whole week,

Get a good workout to feel energized the whole week

Clean your flat on Sunday …. etc.

Grasshopper weights payoffs today, tomorrow, and every day the same: \(w=1\)

- Monday, Grasshopper compares Work to Lazy, and chooses Work.

- payoff(Work) \(= -10 + (2 \times 6) = 2\)

- payoff(Lazy) \(= -5 + (1 \times 6) = 1\)

Ant always weights payoffs at 1 today and \(w=1/2\) on any future day

- Monday, Ant chooses Lazy:

- payoff(Work) \(= -10 +(2 \times 6)/2 = -4\)

- payoff(Lazy) \(= -5 + (1 \times 6)/2 = -2\)

If Grasshopper were ‘choosing for Monday on Sunday’, he would choose Work \(\rightarrow\) Consistent

But if Ant were ‘choosing for Monday on Sunday’, he would choose Work \(\rightarrow\) Inconsistent

- On Sunday he considers

- payoff(Work Monday) \(= (-10 + 2 \times 6)/2 = 1\)

- payoff(Lazy Monday) \(= (-5 + 1 \times 6)/2 = 1/2\)

- But as we saw, on Monday he chooses to be lazy

- Would he ‘benefit from pre-committing?’
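The Grasshopper and Ant calculations above can be reproduced with a minimal Python sketch. The helper names `valuation` and `choice` are illustrative assumptions; the weighting rule is the ‘simple version’ from the text (weight 1 on today, weight \(w\) on every future day).

```python
def valuation(stream, w, starts_in_future=False):
    """Weighted sum of a payoff stream.

    The first payoff in `stream` gets weight 1 if it is received today;
    every payoff received on a later day gets weight `w`.
    If `starts_in_future` is True, the whole stream lies in the future,
    so every payoff gets weight `w`.
    """
    total = 0.0
    for day, payoff in enumerate(stream):
        is_today = (day == 0) and not starts_in_future
        total += payoff if is_today else w * payoff
    return total


work = [-10] + [2] * 6   # effort on the first day, benefits on the next 6 days
lazy = [-5] + [1] * 6


def choice(w, starts_in_future):
    """Return the option with the higher weighted payoff."""
    v_work = valuation(work, w, starts_in_future)
    v_lazy = valuation(lazy, w, starts_in_future)
    return "Work" if v_work > v_lazy else "Lazy"


for name, w in [("Grasshopper", 1.0), ("Ant", 0.5)]:
    plan = choice(w, starts_in_future=True)     # deciding on Sunday for Monday
    action = choice(w, starts_in_future=False)  # deciding on Monday itself
    print(f"{name}: plans {plan} on Sunday, chooses {action} on Monday")
```

With \(w=1\) the plan and the action agree; with \(w=1/2\) the Sunday plan is Work but the Monday choice is Lazy, which is the inconsistency described above.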

9.4 Limited self-interest … e.g., altruism and fairness

‘Other-regarding preferences’: My utility may be impacted by…

- Other people’s utility, or their consumption of particular ‘merit’ goods

E.g., I suffer if I know my neighbour suffers, or her child eats too little \(\rightarrow\) this implies ‘aid to the poor’ may become a public good; thus it may be massively under-provided in a voluntary setting

- My perceived ‘impact’ on outcomes

- If I donate to charity, I feel better

- If my contribution improves people’s lives, protects the environment (relative to my having done nothing)

- \(\rightarrow\) People may make choices reducing their own consumption, to increase others’ consumption

- Charitable giving is widespread; accounts for about 1% of UK GDP

- ‘Donations’ to family members much higher

The utilities, and decisions, of family members are highly connected. There is an ongoing debate and research programme considering when to model a household’s decisions as ‘unitary’ and when to consider the individual preferences of the household members.

‘Other-regarding preferences’ (continued): My utility may also be impacted by…

- My reputation and how others perceive me

\(\rightarrow\) Thus people may donate and cooperate more when they are observed by others.

- How ‘fair’ I believe the outcomes and actions taken are

\(\rightarrow\) People reject significant positive offers in ultimatum games and bargaining

- Anticipating this, people make offers considerably above zero

\(\rightarrow\) People cooperate when they expect others to do so

\(\rightarrow\) people engage in ‘costly punishment’ of others they believe acted unfairly

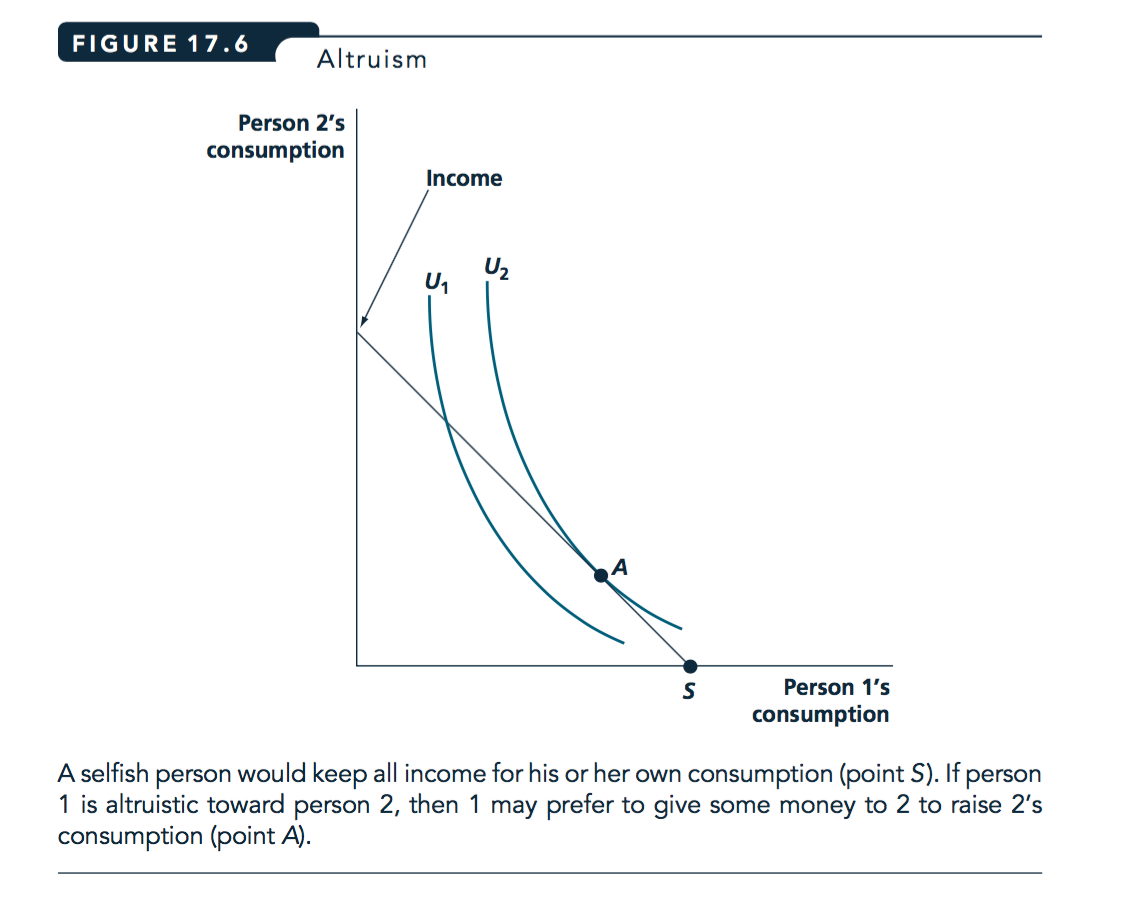

Rational altruism?

We can model some forms of other-regarding preferences using standard tools, like indifference curves and budget constraints:

Source: Nicholson and Snyder, 2012

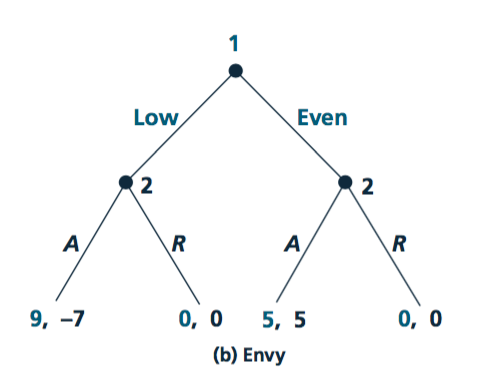

9.4.1 Converting from ‘material payoffs’ to ‘psychological payoffs’

As we have previously noted, the ‘real’ payoffs in a game (or an individual decision) may not be identical to the monetary/material payoffs

(Important to note, this is a distinct issue from ‘diminishing marginal utility’ and risk-aversion.)

Motives like ‘fairness’ may transform monetary payoffs into ‘real’ payoffs in a straightforward way

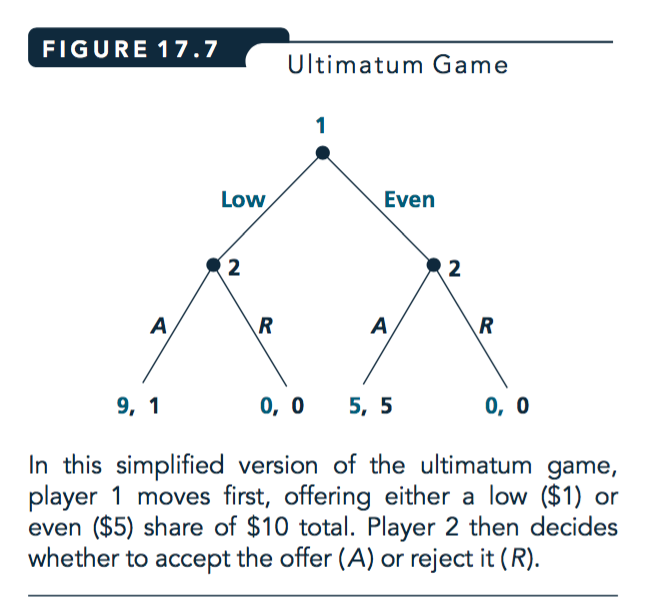

E.g., in the ultimatum game, suppose player 1’s real payoffs are:

\[U_1(Y_1,Y_2) = Y_1 - |Y_1-Y_2|\]

Where \(Y_1\) is the amount 1 earns from the ultimatum game, and \(Y_2\) is 2’s income from this game.

Similarly for player 2:

\[U_2(Y_2,Y_1) = Y_2 - |Y_1-Y_2|\]

Here each player gains 1 unit of utility for each pound they earn, but loses a unit of utility for every pound of difference between the payoffs.

Source: Nicholson and Snyder, 2012

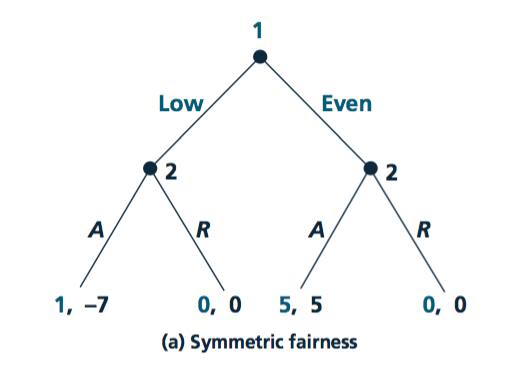

Notes on the above:

Here if earnings are equal, real payoffs are the same as earnings; 0,0 or 5,5

Where earnings are unequal, player-1 gets 9 and player-2 gets 1 from material payoffs.

But because of a difference of 8 in earnings, both players lose 8 in psychological payoffs

so the net real payoffs if player-1 offered 1 and 2 accepted would be 9-8=1 for player-1 and 1-8=-7 for player-2.

Using backwards induction here, we see the subgame-perfect Nash equilibrium will be that player-1 offers 5 and player-2 accepts.

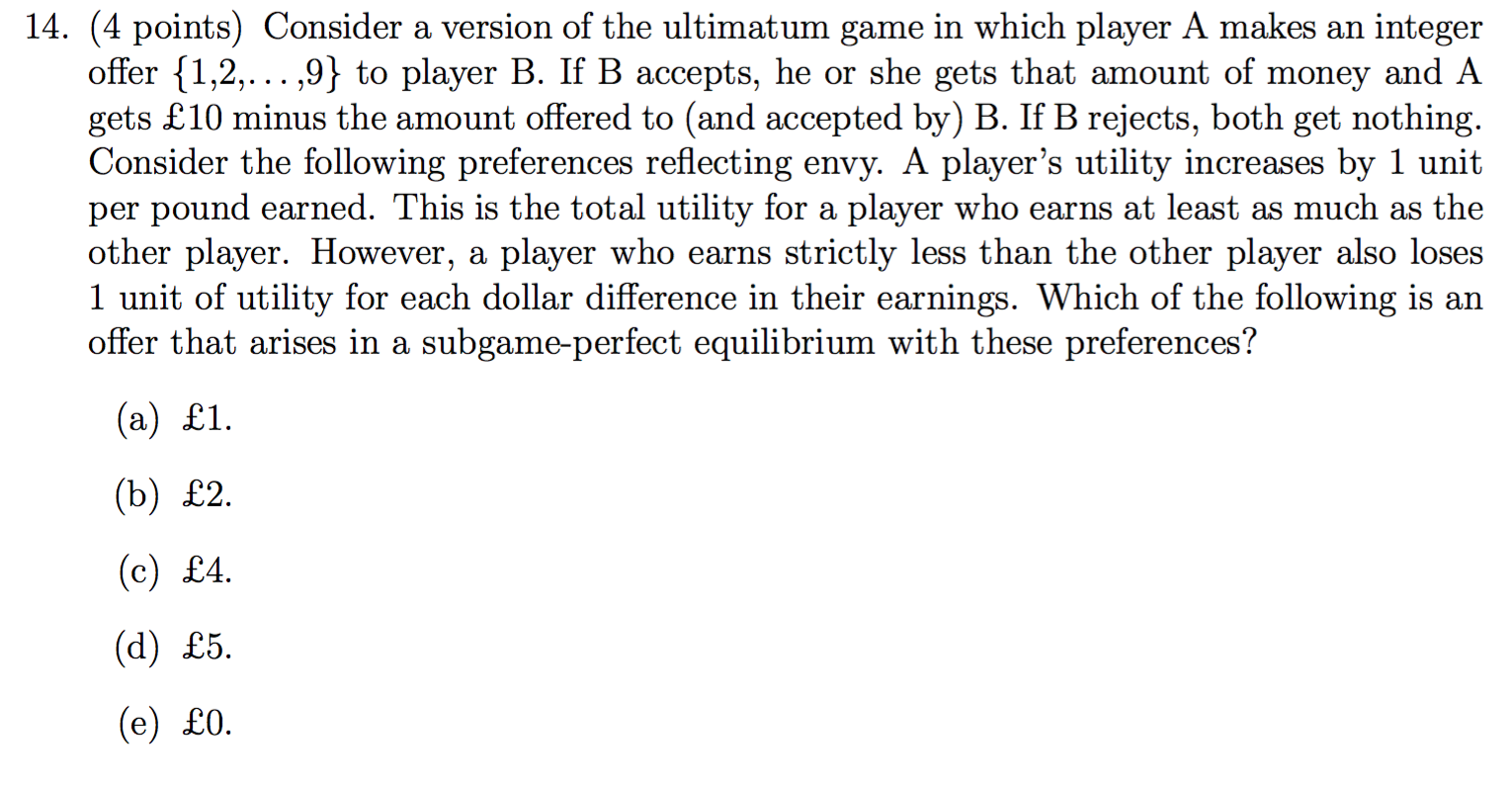

‘Envy payoffs’

Notes and explanation of the above:

For the outcomes considered, only player-2’s payoffs are adjusted from the material payoffs;

she gets 1-8 = -7 from accepting an offer of 1; her material payoff is 1 but she loses 8 in envy.

Because of this she will not accept such an offer. Knowing this, player-1 will offer 5.
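The conversion from material to ‘real’ payoffs, and the backwards-induction step, can be sketched in Python for both the fairness and the envy versions. This is an illustration under stated assumptions: a pie of £10 (implied by the 9/1 and 5/5 splits), a responder who accepts when indifferent, and hypothetical function names. The envy utility is written as own earnings minus the amount by which the other player is ahead, which matches the 1-8 = -7 calculation above.

```python
PIE = 10  # total to split, in pounds (assumed from the 9/1 and 5/5 examples)


def fairness_utility(own, other):
    """Lose one unit of utility per pound of difference, in either direction."""
    return own - abs(own - other)


def envy_utility(own, other):
    """Lose one unit of utility per pound by which the other player is ahead."""
    return own - max(other - own, 0)


def solve_by_backward_induction(utility, offers):
    """Return (offer, proposer_payoff, responder_payoff) for the outcome
    found by backwards induction.

    Assumes the responder accepts when indifferent, and that a rejection
    leaves both players with zero earnings.
    """
    reject_payoff = utility(0, 0)
    best = None
    for offer in offers:
        proposer_earn, responder_earn = PIE - offer, offer
        # Step 1: the responder's best reply to this offer
        accepts = utility(responder_earn, proposer_earn) >= reject_payoff
        if accepts:
            payoffs = (utility(proposer_earn, responder_earn),
                       utility(responder_earn, proposer_earn))
        else:
            payoffs = (reject_payoff, reject_payoff)
        # Step 2: the proposer keeps the offer with the highest real payoff
        if best is None or payoffs[0] > best[1]:
            best = (offer, payoffs[0], payoffs[1])
    return best


# The two offers considered in the examples: 1 or 5
print("fairness:", solve_by_backward_induction(fairness_utility, offers=[1, 5]))
print("envy:    ", solve_by_backward_induction(envy_utility, offers=[1, 5]))
```

With only the offers 1 and 5 available, both versions give an offer of 5 that is accepted. If every whole-pound offer were allowed (`offers=range(0, PIE + 1)`), this sketch would still give 5 under the fairness utility, but under the envy utility the proposer would make the smallest offer the responder accepts, which is 4 with these numbers.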

9.4.2 Supplemental (reading): Evidence on the Ultimatum Game

Güth et al. (1982) presented the first experimental test of the ultimatum game.

This game has two players: a Proposer and a Responder

Proposer has a pie of size 1. She must propose a split of the pie between the two players \((1-s, s)\)

The Responder may:

- accept (in which case the split is executed)

- reject (in which case both players get zero)

This is a ‘take it or leave it offer’ in bargaining. What do you think he/she will offer? Do you think he/she will accept? Why or why not?

9.4.3 Ultimatum game: theoretical predictions

Allowing ‘non-credible threats’, this game has as many Nash equilibria as there are possible splits of the pie.

In each of them, the Responder’s strategy is to ‘accept only if offered X (or more)’ for some \(X\geq0\), and the Proposer’s strategy is to offer exactly X.

But maybe these equilibria don’t seem like reasonable predictions (why?)

Q: What does SPNE predict (use backwards induction)?

If the split is \((1,0)\), the Responder is indifferent between accepting and rejecting

That still means the Proposer offering \((1,0)\) and the Responder accepting is a NE

The subgame-perfect Nash equilibrium is found by solving the game backwards:

The Proposer (correctly) anticipates the Responder will accept any offer

- Thus she offers zero, which is accepted ‘in equilibrium’

(A ‘near zero’ offer would lead to a similar result, and might seem more intuitive)

Note that these are basic game-theoretic predictions, not behavioural ones.

What happens in experiments?

A 50-50 split is the most common offer

Responders tend to reject offers giving them less than 30%, even when this is a lot of money.

Potential explanations for the above

Fairness concerns; monetary payoffs may not represent actual payoffs

Proposers may anticipate this, and thus offer more than the minimum to avoid their offer being rejected. (Also, proposers may themselves have fairness preferences.)

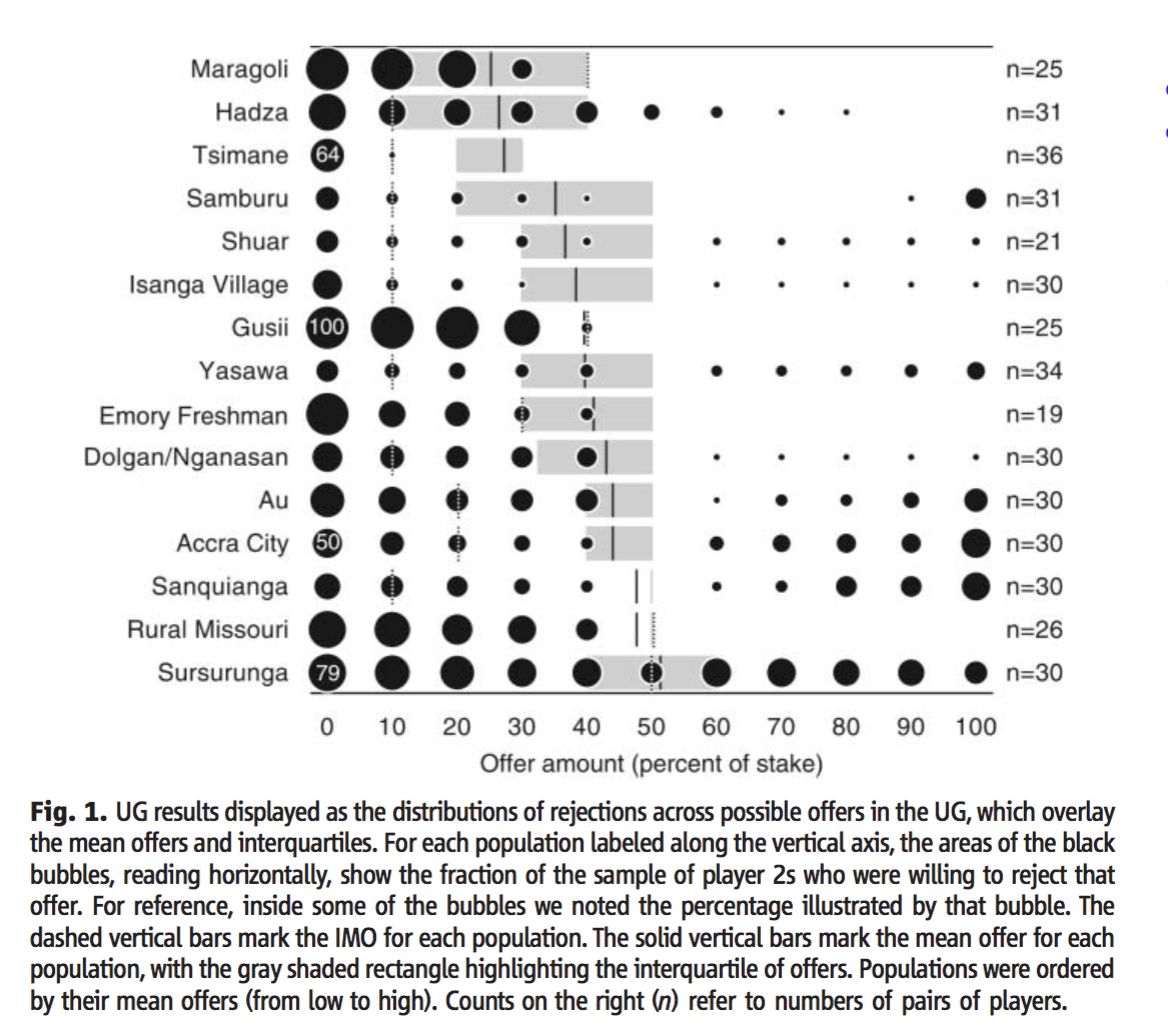

In a variety of cultures and contexts (Henrich et al, 2006):

9.5 Application: Give-if-you-win (EU vs. Prospect theory)

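Expected utility (EU) over outcomes predicts that the donation committed to Before (conditional on winning) and the donation chosen After winning will be the same.

Here, in making conditional choices ‘if I win’ etc., the decisions for each state (win or lose) are separate.

- Max \(EU = \Pr(\text{win})\, u(\text{win}, \text{choice if win}) + \Pr(\text{lose})\, u(\text{lose}, \text{choice if lose})\)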

- Choice for ‘if I win’ does not affect payoff ‘if I lose’

Before I know the outcome, I may anticipate what my utility-maximising donation \(g^*\) would be if I win the bonus

Let \(c\) be own consumption, \(Y\) income pre-bonus, \(w\) the bonus amount, and \(g_b\) the committed donation ‘if I win \(w\)’

I choose \(g_b\) to maximize utility \(u(c, g)\) s.t. budget constraint \(c+g \leq Y+w\)

If asked to pre-commit ‘if I win’, I commit to that choice, so \(g_b=g^*\)

After I have won the bonus \(w\), I maximize utility \(u(c, g)\) s.t. budget constraint \(c+g \leq Y+w\)

- Same maximisation as above, so \(g_a=g_b=g^*\)
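A small numerical sketch may make the EU prediction concrete. Everything specific here is an assumption for illustration: the log (Cobb-Douglas style) utility function, the weight `ALPHA`, the amounts in £k, and the whole-£k grid search. None of it comes from the experiment itself.

```python
import math

Y, W = 50, 50    # income and bonus, in £k (illustrative)
P_WIN = 0.5      # probability of winning the bonus (illustrative)
ALPHA = 0.9      # weight on own consumption (hypothetical)


def u(c, g):
    """Hypothetical utility over own consumption c and giving g."""
    return ALPHA * math.log(c) + (1 - ALPHA) * math.log(g)


def best_gift(budget):
    """Grid-search the whole-£k gift that maximises u(budget - g, g)."""
    return max(range(1, budget), key=lambda g: u(budget - g, g))


# After: the bonus has been won, so choose g with budget Y + W.
g_after = best_gift(Y + W)

# Before: choose a conditional gift g_b, paid only if I win.
# The 'lose' term does not depend on g_b, so it cannot change the ranking.
g_lose = best_gift(Y)


def expected_utility(g_b):
    return P_WIN * u(Y + W - g_b, g_b) + (1 - P_WIN) * u(Y - g_lose, g_lose)


g_before = max(range(1, Y + W), key=expected_utility)

print("committed Before:", g_before, " chosen After:", g_after)
```

Under these assumptions both asks give the same gift, for any win probability between 0 and 1, because the Before problem is just the After problem multiplied by a positive constant plus a term that does not involve the conditional gift.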

However, other models predict distinct choices with the Before and After asks

Reputation: If I give to benefit my reputation or self-esteem, unrealised donations may matter, not just donation outcomes

- This may be an incentive to commit more the less likely a donation needs to be paid

- ‘If I win the lottery megabucks, I will give it all away’

Loss aversion: If I am ‘loss averse’ over my own consumption, it may seem ‘cheaper’ to commit before I win the bonus than after

Why? Because my reference point may change. Suppose I have a £50k income and a potential £50k bonus with 50% probability

Before winning my reference consumption may be the EV, £75k

So a conditional commitment to donate £10k if I have a total income+bonus of £100k may not seem like a loss

But after I win the bonus, my new reference consumption may be £100k

So each pound donated might feel like a loss
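The reference-point argument can be illustrated with a piecewise-linear gain-loss function. The loss-aversion coefficient `LAMBDA = 2` and the function names are hypothetical choices made for this sketch; the income, bonus, and donation figures (in £k) are those of the example above.

```python
LAMBDA = 2.0   # hypothetical loss-aversion coefficient: losses count double


def gain_loss_value(consumption, reference):
    """Piecewise-linear value of consumption relative to a reference point."""
    x = consumption - reference
    return x if x >= 0 else LAMBDA * x


def felt_cost_of_donating(donation, total_income, reference):
    """Drop in gain-loss value caused by donating out of total_income."""
    return (gain_loss_value(total_income, reference)
            - gain_loss_value(total_income - donation, reference))


income, bonus, p_win = 50, 50, 0.5        # £k, from the example above
ref_before = income + p_win * bonus       # expected value: 75
ref_after = income + bonus                # 100 once the bonus is won

cost_before = felt_cost_of_donating(10, income + bonus, ref_before)
cost_after = felt_cost_of_donating(10, income + bonus, ref_after)
print("felt cost of a £10k donation, Before:", cost_before)
print("felt cost of a £10k donation, After: ", cost_after)
```

In this sketch the Before donation only reduces a gain (from 25 to 15 above the reference point), while the After donation is coded entirely as a loss, so it feels twice as costly.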

What do you think happens when you ask people to donate either Before-conditionally or After winning a prize or bonus? Do they commit more ‘before’ than they give ‘after’, or vice versa, or are these equivalent?

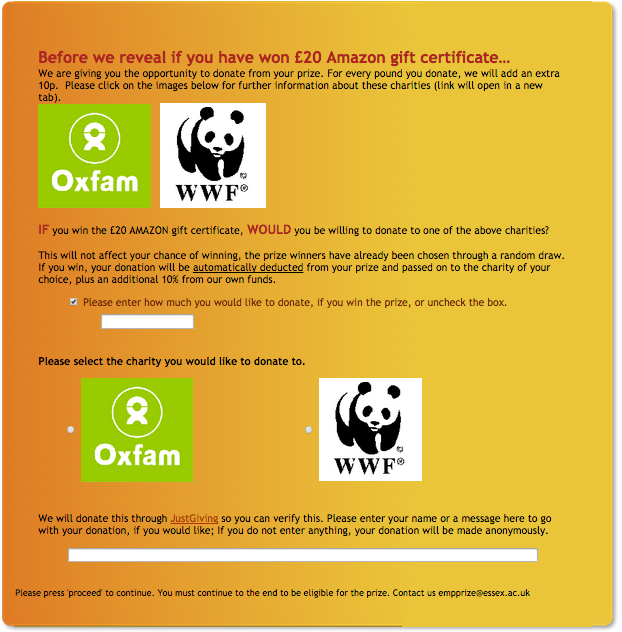

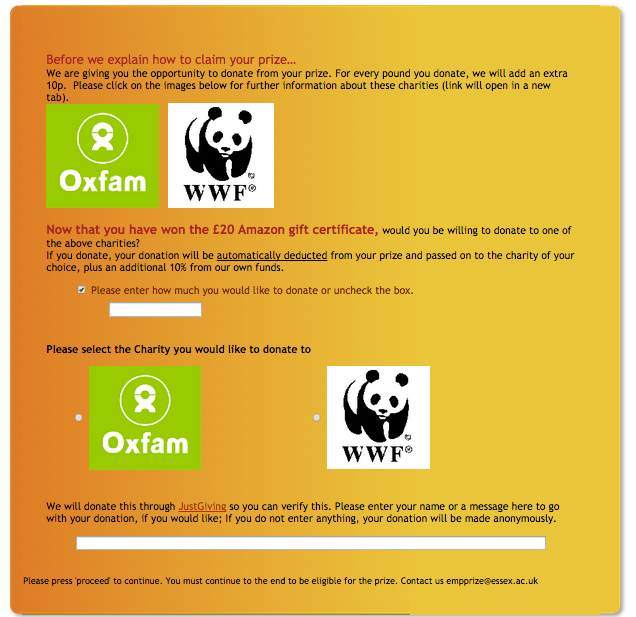

Field experiment

One field experiment: University of Essex undergraduate students

Emails from DAs on behalf of FSS

Subject: Employability promotion – a 1 in 4 chance of winning a £20 prize for doing a short survey.

Text: Please go to [web site] – we have 80 free dinners for two in Colchester to give away, worth £20 each and at least 40 £20 Amazon vouchers!! If you log on, you will have a one in four overall chance of winning one of these prizes!

Details: 2 rounds over 2 academic years. 2 prizes in round 1, Amazon only in round 2. First covering Faculty of Social Science, then all departments. Sign in with email, orthogonal employability treatments.

\(\rightarrow\) 352 valid responses with donation opportunities

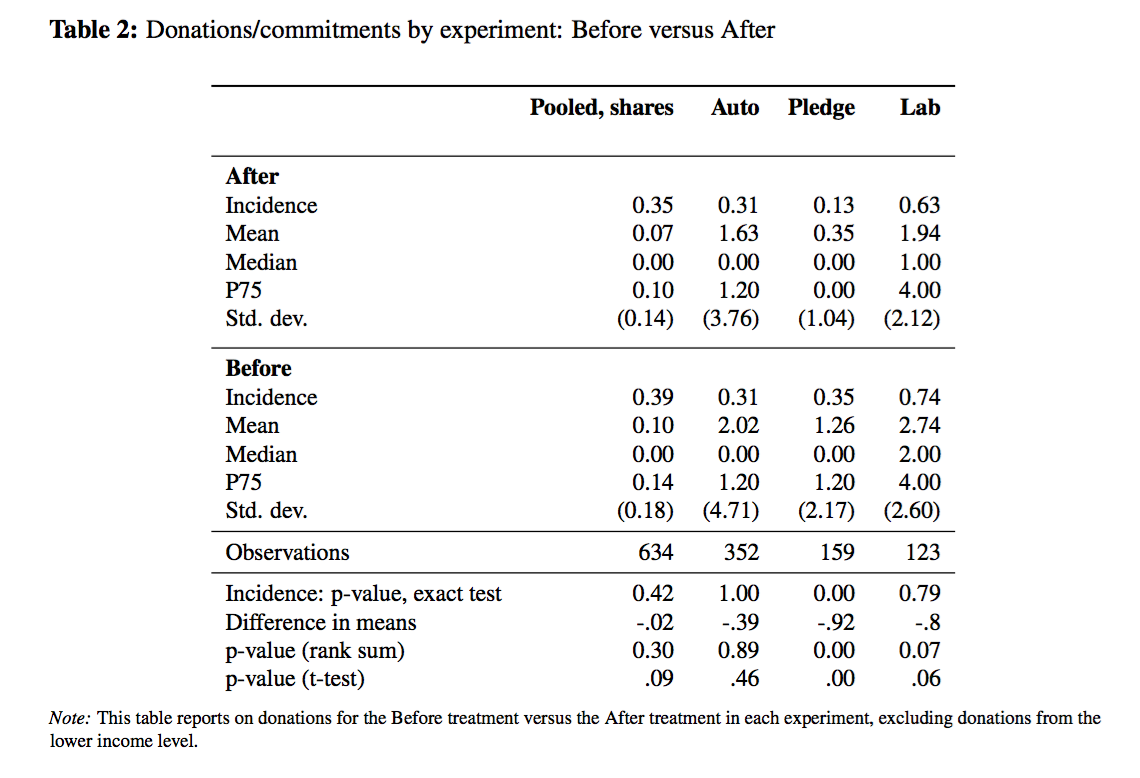

Before charity treatments

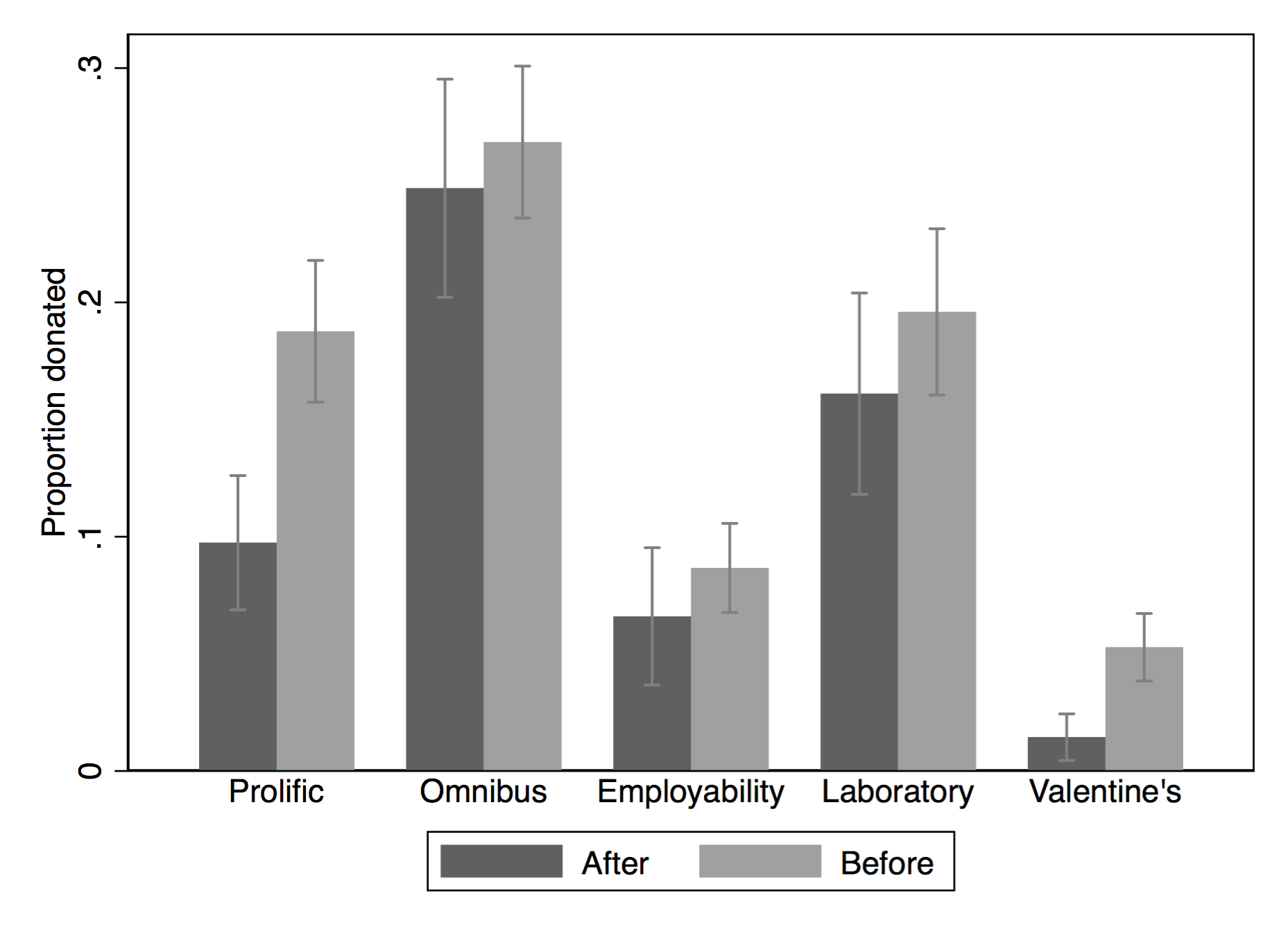

Results: In a variety of experiments, donation incidence and amounts are often higher and never lower in the Before treatment. This is statistically significant in two of five experiments and in the ‘pooled’ data.

see https://davidreinstein.wordpress.com/research-and-publications/ under ‘working papers’

More details of this project at giveifyouwin.org

9.6 Behavioral Economics: further exercises and examples

Below, I provide some revision material and questions focusing on Behavioral economics

- Recap ‘converted ultimatum game’ problems

- Sources of evidence on various departures from classical assumptions

From an earlier final exam

Answer…

- As long as money payoffs are in my favour, I don’t have envy, so maximise these if possible

- So proposer will make smallest offer that responder will accept, presuming this is at or below 5

- Responder accepts as long as Earnings - difference in earnings is at or above 0

Sources of evidence and discussion

I want you to have a decent background on…

- The Allais Paradox: setup, evidence, explanations

- ‘Solving converted games’

9.7 Inconsistent preferences; examples considering decisions under uncertainty (simple treatment)

9.7.1 Allais paradox - what could be the explanation?

… As presented and discussed above, in section 3.8

SO WHY do people choose B over A and D over C?

- One theory: People overweight small probabilities

- \(\rightarrow\) Gamble A: the 1% chance of 0 is treated as larger?

We consider this and other possible explanations below, but first we consider one more paradox…

Kahneman and Tversky scenarios

Warning: This is not the standard Allais paradox, it is a different paradox.

KT1. Which would you choose?

You get £1000 upfront.

Gamble \(A\): You have a 50% chance of winning an additional £1,000.

Gamble \(B\): An additional £500 with certainty.

Write down: which would you choose?

KT2. Which would you choose?

You get £2000 upfront.

Gamble \(C\): You have a 50% chance of losing £1,000.

Gamble \(D\): You lose £500 with certainty.

KT (hypothetical) experiment: 16% of subjects chose \(A\) over \(B\), and 68% chose \(C\) over \(D\)

- But \(A\) is the same as \(C\) (50/50 chance of £1000 or £2000),

- and \(B\) is the same as \(D\) (certain £1500)!

Seems to depend on how we ‘frame’ these.
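The equivalence of the two framings can be verified by converting each gamble into a distribution over final amounts. This is a minimal sketch; the helper `final_wealth` and the dictionary representation of a gamble are illustrative assumptions.

```python
def final_wealth(upfront, outcomes):
    """Map a gamble given as {change: probability} to {final amount: probability}."""
    return {upfront + change: prob for change, prob in outcomes.items()}


A = final_wealth(1000, {+1000: 0.5, 0: 0.5})
B = final_wealth(1000, {+500: 1.0})
C = final_wealth(2000, {-1000: 0.5, 0: 0.5})
D = final_wealth(2000, {-500: 1.0})

print("A:", A, " C:", C, " same lottery:", A == C)
print("B:", B, " D:", D, " same lottery:", B == D)
```

Since A and C (and B and D) are identical in terms of final outcomes, any theory defined only over final outcomes must rank A against B in the same way as C against D.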

9.7.2 Explaining the above paradoxes with prospect theory

- Above choices: cannot be explained by ‘regular’ EU theory

- Mistakes, misunderstanding probabilities?

- Prospect theory, loss-aversion: not mistakes, but maximising something other than EU

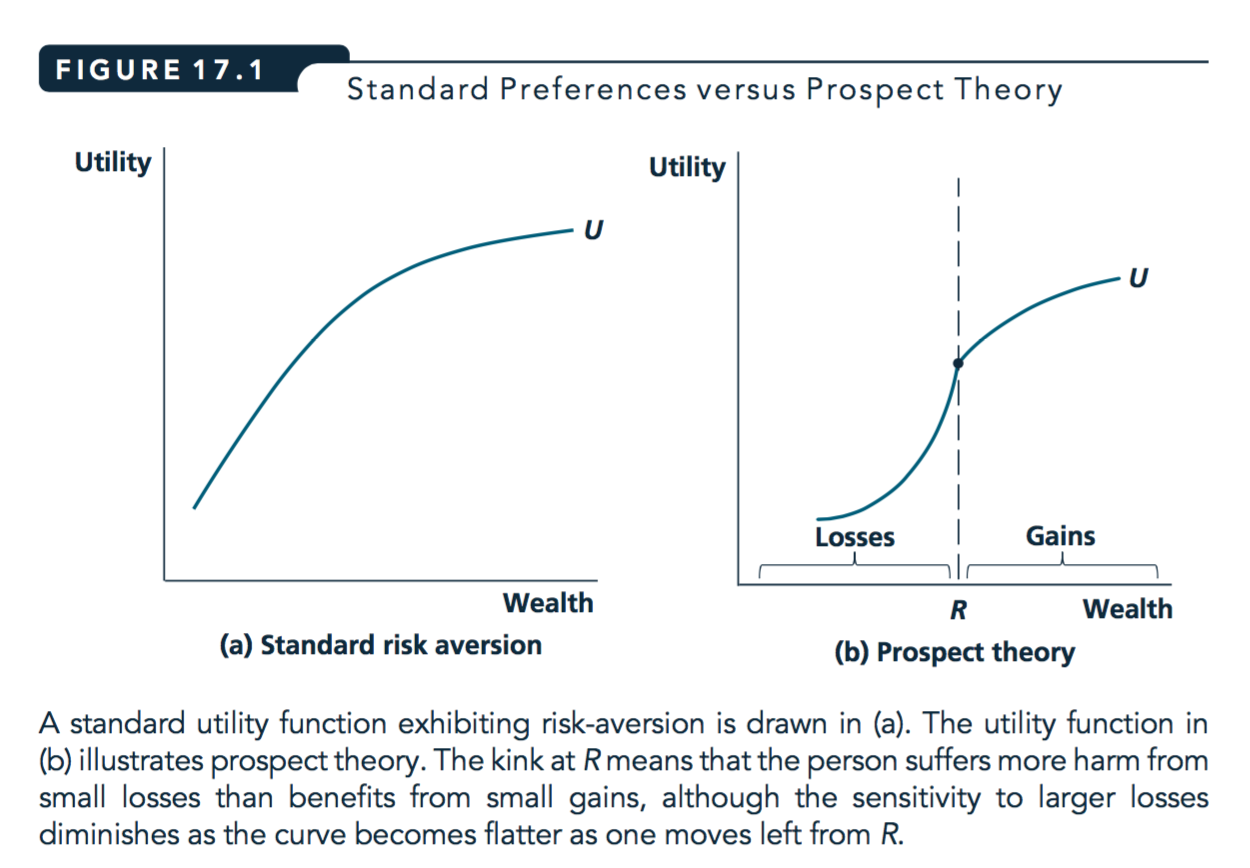

Prospect theory – loss aversion (LA) part

- People don’t think only about outcomes but about ‘gains or losses relative to a reference point’

- Outcomes considered losses are particularly painful

- Whether an outcome is a loss depends on the reference point

- which may depend on how a decision is framed

- or on the starting point, or initial expectations

\(\rightarrow\) may make decisions to ‘avoid losses’

that we wouldn’t make if we saw it as ‘increasing gains’

Standard and loss-averse utility

Source: Nicholson and Snyder, 2012

Notes:

What leads to the paradoxes and ‘inconsistent behaviour’?

The fact that the reference point can vary depending on things that are ‘irrelevant’ from an EU perspective

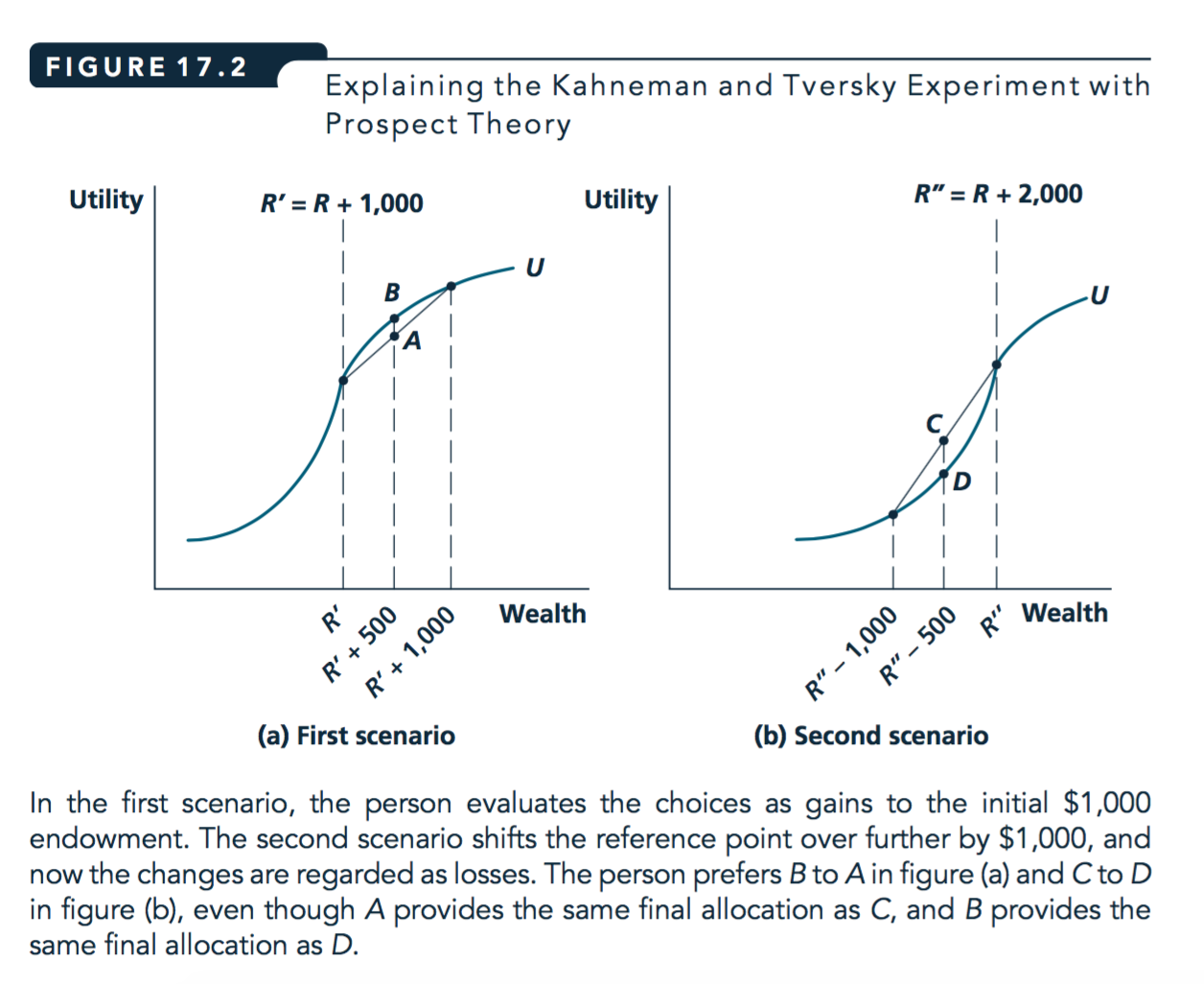

KT experiment with prospect theory (loss aversion)

KT1: £1000 upfront.

- Gamble \(A\): You have a 50% chance of winning an additional £1,000.

- Gamble \(B\): An additional £500 with certainty.

KT2: £2000 upfront.

- Gamble \(C\): You have a 50% chance of losing £1,000.

- Gamble \(D\): You lose £500 with certainty.

- In KT1, your reference point may be £1000.

- Choose between A (a larger uncertain gain) and B (a smaller certain gain), with the same EV

- … standard risk aversion predicts choosing B

- In KT2, your reference point may be £2000.

- Choose between C (a possible large loss) and D (a certain smaller loss), with the same EV

- … standard risk aversion predicts choosing D

- But if the feeling of loss is very painful, may choose C>D to have a 50% chance of avoiding pain
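One way to see how a reference-dependent value function can generate the pattern ‘B in KT1, C in KT2’ is the sketch below. It assumes the power value function and parameter estimates from Tversky and Kahneman (1992), curvature 0.88 and loss-aversion coefficient 2.25, which are not part of these notes. It also ignores probability weighting. The function names are illustrative.

```python
ALPHA = 0.88    # curvature of the value function (assumed parameter)
LAMBDA = 2.25   # loss-aversion coefficient (assumed parameter)


def value(x):
    """Value of a gain or loss x, measured from the reference point."""
    return x ** ALPHA if x >= 0 else -LAMBDA * (-x) ** ALPHA


def prospect_value(gamble):
    """Probability-weighted value of a gamble given as {change: probability}."""
    return sum(prob * value(change) for change, prob in gamble.items())


kt1 = {"A": {1000: 0.5, 0: 0.5}, "B": {500: 1.0}}      # reference point £1000
kt2 = {"C": {-1000: 0.5, 0: 0.5}, "D": {-500: 1.0}}    # reference point £2000

for label, scenario in (("KT1", kt1), ("KT2", kt2)):
    scores = {name: round(prospect_value(g), 1) for name, g in scenario.items()}
    print(label, scores, "-> chooses", max(scores, key=scores.get))
```

In this particular sketch, the switch from the safe option over gains to the risky option over losses comes from the value function being concave for gains and convex for losses, while `LAMBDA` scales how painful any loss is. Other parameter values or functional forms could give different magnitudes.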

Note: This may help explain why some problem gamblers incur larger and larger losses to try to ‘make up’ for bad performance earlier in the day.

Source: Nicholson and Snyder, 2012

- Framing effect

- The same choice, presented in two different ways, may lead to different decisions.

Allais redux: loose prospect theory (Loss Aversion) explanation

Note: We previously mentioned misweighting probabilities as an explanation for this.

An alternative explanation could be loss-aversion

- A: 89% chance of £1,000, a 10% chance of £5,000, 1% chance of 0.

- B: £1,000 with certainty.

- C: 89% chance of 0 and 11% chance of £1,000.

- D: 90% chance of 0 and 10% chance of £5,000.

- Considering A & B, the ref. point may be close to £1000, as this can be had with certainty.

- A seems to ‘risk a costly loss of £1000’, thus may choose ‘safer’ \(B>A\), notwithstanding its far lower EV (£1000 vs £1390)

- Considering D & C, ref. point may be close to 0, as EVs of each are low, & 0 is likeliest outcome

- So ‘don’t worry about losses’, choose D > C because of its higher EV (£500 vs. £110)
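The expected values quoted for the four Allais gambles can be checked directly. In this sketch, probabilities are written as whole percentages to keep the arithmetic exact; the data layout and the function name are illustrative assumptions.

```python
# Each gamble is a list of (probability in percent, prize in £)
gambles = {
    "A": [(89, 1000), (10, 5000), (1, 0)],
    "B": [(100, 1000)],
    "C": [(89, 0), (11, 1000)],
    "D": [(90, 0), (10, 5000)],
}


def expected_value(gamble):
    """Expected prize in £ of a gamble given as (percent, prize) pairs."""
    assert sum(p for p, _ in gamble) == 100  # probabilities must sum to 100%
    return sum(p * prize for p, prize in gamble) / 100


ev = {name: expected_value(g) for name, g in gambles.items()}
for name, amount in ev.items():
    print(f"EV({name}) = £{amount:.0f}")
```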

I also want you to have a decent background on…

- Some sense of the evidence ‘for behavioral economics’ in general

- Evidence on voluntary public goods provision and charitable giving (lab, field, etc) and factors leading to greater provision of each

- Relevance of ‘behavioural biases’ for public policy and business

Evidence in general, some helpful (easier) readings

DellaVigna, Stefano. “Psychology and economics: Evidence from the field.” Journal of Economic Literature 47.2 (2009): 315-372.

“EAST: Four simple ways to apply behavioural insights” (BIT, 2014)

Also see popular books and web-tools by Dan Ariely (‘Arming the Donkeys’), Richard Thaler, etc.

Yudkowsky’s blogs \(\rightarrow\) ‘From AI to Zombies’

Fairness, social preferences

Discussion of fairness from ‘Golden Balls’ game show – see HERE and links provided

Meier (2009). “A Survey of Economic Theories and Field Evidence on Pro‐Social Behavior”

List, John A. “Social Preferences: Some Thoughts from the Field.” Annual Review of Economics, vol. 1, 2009, pp. 563–579

Bowles and Gintis “Social Preferences” chapter in “A Cooperative Species”

Public goods contributions

- Chaudhuri, 2009. Sustaining cooperation in laboratory public goods experiments: a selective survey of the literature

Time-inconsistency

- Ashraf, N. et al. (2006) “Tying Odysseus to the mast: Evidence from a commitment savings product in the Philippines”

On charitable giving:

innovationsinfundraising.org: A collaborative wiki guide to the evidence on practical influences on charitable giving

Behavioural Insights Team ‘Applying behavioural insights to charitable giving’

Ideas42: Behavior and charitable Giving

Some of my projects:

Giveifyouwin.org: Discussion of loss aversion, other biases, in the context of charitable giving …

innovationsinfundraising.org: Includes a more general discussion and survey of the literature

List (2011). Econ Perspectives or List (2008, ExpEcon), “Introduction to field experiments in economics with applications to the economics of charity”

Allais paradox

Writeup in Wired magazine HERE

Yudkowsky on the Allais paradox … and

Huck, Steffen, and Wieland Müller. “Allais for all: Revisiting the paradox in a large representative sample.” Journal of Risk and Uncertainty 44.3 (2012): 261-293.

See also Misweighting probabilities …many useful readings on LessWrong.org