2.2.2 Content

This section will go through the relevant O-R text material and a bit more. However, you should read the relevant sections of O-R alongside this.

What can we reasonably say about ‘what people prefer and choose’? How can we state this carefully and precisely? What are the implications of particular ‘reasonable’ assumptions? (How) can we consider this in terms of ‘optimization’? How have Economists and decision-scientists considered these questions?

Digging deeply into the logic and justifications for these models of choice, and alternative frameworks, will be very relevant in considering Behavioral Economics (This is a specialty of Ariel Rubinstein, one of the O-R text’s two authors, and also a research interest of mine). In fact, this text works behavioral economics in from the beginning.

This is also relevant for building empirical models such as ‘models of consumer choices and preferences’ in ways that are relevant to (e.g.) business-data-analytics consulting.

We approach this in an abstract and logically and mathematically-rigorous, but not highly ‘computational’ way. Even if you have taken Economics as an undergraduate, you may not have seen this approach.

We will not always be quite so mathematically rigorous throughout the module. However, I want to at least give you some of these tools, and a ‘flavour’ of this approach, which characterises modern Microeconomics (and will be at the core of PhD modules).

Main reading (largely integrated into web book):

Note: Within O-R chapter 1, feel free to skip the proofs of propositions 1.1 (‘representing preferences by utility functions’) and 1.2 (‘Preference relation not represented by utility function’).

Alternative treatments for some of this material (read this if you need a different approach than the ones O-R are taking)… unfold

Here is a version we can write dropbox notes in

AUT: Lecture 3 - Axioms of Consumer Preference and the Theory of Choice (note, slightly different notation)

NS: Ch 2

Supplementary and conceptual readings (please read at least some of these):

D. Colander, ‘Edgeworth’s Hedonimeter and the Quest to Measure Utility’, Journal of Economic Perspectives, Spring 2007: 215-225.

‘Predicting Hunger: The Effects of Appetite and Delay on Choice’; Read and van Leeuwen, 1998 … and related work… worth getting a sense of this, particularly in the context of the Sugden (2015) reading, such as Kahneman and Richard H. Thaler. 2006. “Anomalies: Utility Maximization and Experienced Utility.” Journal of Economic Perspectives, 20 (1): 221-234

Note that this excerpts and mainly follows O-R chapter 1. I try to highlight the key points, explain them a bit more, and add some additional content that seems important. You must read that chapter (except for the sections I note you may skip) along with these notes. All quotes are from O-R unless otherwise noted.

This section considers preferences separately from choices. Later, we will consider choices as potentially being made according to one’s preferences subject to one’s constraints.

However, behavioral economics also considers the possibility that (and ways in which) individuals might make choices that in fact do not optimise, even given their own preferences.

We now consider the very fundamental building blocks behind the (in)famous ‘neoclassical economics model.’ If you accept these ‘axioms’, nearly everything flows from them! We will also consider alternative assumptions influenced by behavioral economics.

Please feel free to type some thoughts in the Hypothes.is sidebar, to engage discussion on this.

You can find another ‘idea-stimulating’ exercise referenced in the O-R book, the questionnaire they give at http://gametheory.tau.ac.il/exp11/. It’s worth doing as they refer to it later in several parts of the book.

Consider…

Consider a decision you recently made. Define this decision clearly; what were the options? How do you think you decided among these options?

What did this depend on? Would other people in your place have made the same decision?

If you got amnesia, forgot what you decided, and then were in the same situation again, do you think you’d make the same decision?

Figure 2.1: Am I blowing your mind here?

Next, suppose I asked you: ‘State a rule that governs how people do make decisions’…

I want this rule to be both

Informative (it rules out at least some sets of choices) and

Predictive (people rarely if ever violate this rule).

Now suppose I asked an apparently similar question:

‘State a rule that governs how people should make decisions’..

By ‘should’ I mean that they will not regret having made decisions in this way.

If people did follow these rules, what would this imply and predict?

Economists (and decision theorists/decision scientists) have specifically defined such rules in terms of ‘axioms about preferences’

They have started from these ‘reasonable axioms’ and followed their logical implications for individual choices, individual responses to changes in prices and income, market prices and quantities and their responses, ‘welfare’ and inequality outcomes for entire economies, etc.

The ‘Standard axioms’ (imply that) choices can be expressed by ‘individuals maximising utility functions subject to their budget constraints’.

This yields predictions for individual behavior, markets, etc. We will get to this.

We want to develop a model that can be used to show how we make choices or decisions.

In the (neo) classical economics framework, your (optimising) choices are determined by two things:

Preferences: what goods do you like?

Constraints: how much money do you have, what are the prices of the goods you buy?

Some more thoughts on ‘why are we learning this strange stuff?’ (unfold)

For the classical Economics model, the work on preferences seems (to me) to be largely a matter of ‘finding a consistent justification for our models of utility and choice’. If you already find these models plausible, perhaps this is not so interesting.

However, I see these details as particularly relevant for a few reasons and in a few contexts.

When we take a step back and look outside the classical model to consider:

1. Group and ‘social preferences’ including preferences over abstract quantities and contexts that nonetheless have real impact on decisions. For example, ‘effective altruists’ and social planners need to consider whether to support charities and policies that may ‘bring more happy people into existence’ against others that do more to ‘improve the happiness of existing people’. The philosophical issue of “population ethics” (see, e.g., Blackorby, Bossert, and Donaldson (1995), and the discussion in this podcast) delves deeply into how preferences can be reasonably defined, and millions of dollars in donations are potentially at stake.

2. ‘Behavioral economics’ models that consider choices (and perhaps preferences) that are inconsistent in certain ways, and potentially affected by ‘frames’.

3. Empirical approaches to modeling choices, including some models such as ‘tree-models’, which may not be easily connected to the standard ‘utility-maximizing’ approach.

Also, this formality sharpens your mind, and makes you better at logical argumentation in your research work, and perhaps also in things like computer programming.

O-R give some background

We follow the approach of almost all economic theory and characterize an individual by her preferences among the alternatives, without considering the origin of these preferences.

And unlike many other texts, O-R explain that they are doing this, and, in the examples, give some indication of why this is relevant.

What sort of ‘preferences’ are we considering? Just ‘ordinal’ ones, at least for now:

When we express preferences, we make statements like “I prefer a to b”, “I like a much better than b”, …

In this book [as is standard] the model of preferences captures only statements of the first type… [the ranking]

We can think of an individual’s preferences over a set of alternatives as encoding the answers to a questionnaire. For every pair \((x, y)\) of alternatives in the set, the questionnaire asks the individual which of the following three statements fits best his attitude to the alternatives.

- I prefer x to y.

- I prefer y to x.

- I regard x and y as equally desirable.

These will later be formally encoded as

\(x \succ y\),

\(y \succ x\) (or \(x \prec y\)),

and

\(x \sim y\),

respectively. However, as O-R show, these can all in fact be denoted with the careful use of a single ‘at least as good’ operator.

O-R next discuss ‘binary relations’ and their properties.

A binary relation on a set \(X\) specifies, for each ordered pair \((x , y )\) of members of X , whether or not x relates to y in a certain way.

This is getting abstract, and unless you’ve taken some ‘pure maths’ you may be a bit confused and overwhelmed here. In this case, I will try to help you: the folded content below is a question and answer on this with a student. Beware that this is a digression, so if you already know this stuff, you can skip it.

Q (from student whom I will call ‘Sue’): Not sure the meaning of ‘binary relation’. Does it refer to relationships between two objects?

A: Yes, this is a pure maths concept. Think of a ‘binary relation’ as a computer program that does the following…

For example, we can apply the binary relationship ‘\(R_s\): objects go well together in a sandwich’ to the above…

‘Peanut butter’ \(R_s\) ‘Jelly’ \(\rightarrow\) true

‘Peanut butter’ \(R_s\) ‘tuna fish’ \(\rightarrow\) false

Another binary relation could be ‘\(R_l\): the first object is usually larger than the second one.’ Here we would have:

‘Whale’ \(R_l\) ‘Minnow’ \(\rightarrow\) true

‘Minnow’ \(R_l\) ‘Whale’ \(\rightarrow\) false

Sue: Thank you! These examples are great!! I think they would be great to put in the web-book if you are planning to create one.
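To make the ‘computer program’ idea concrete, here is a minimal Python sketch. It treats each binary relation as a function that takes an ordered pair and returns true or false. The pairing table and the typical sizes are made-up illustrative data, not anything from O-R.

```python
# Hypothetical data: ordered pairs that "go well together in a sandwich" (R_s).
GOES_WELL = {("peanut butter", "jelly"), ("jelly", "peanut butter")}


def r_s(x: str, y: str) -> bool:
    """Binary relation R_s: True if x goes well with y in a sandwich."""
    return (x, y) in GOES_WELL


# Hypothetical typical lengths in metres, used only to define R_l.
TYPICAL_LENGTH_M = {"whale": 25.0, "minnow": 0.07}


def r_l(x: str, y: str) -> bool:
    """Binary relation R_l: True if x is usually larger than y."""
    return TYPICAL_LENGTH_M[x] > TYPICAL_LENGTH_M[y]


print(r_s("peanut butter", "jelly"))      # True
print(r_s("peanut butter", "tuna fish"))  # False
print(r_l("whale", "minnow"))             # True
print(r_l("minnow", "whale"))             # False
```

Note that the order of the pair matters: `r_l("whale", "minnow")` and `r_l("minnow", "whale")` give different answers.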

For example, “acquaintance” is a binary relation on a set of people. For some pairs \((x , y )\) of people, the statement “x is acquainted with y” is true, and for some pairs it is false.

(The English word ‘acquaintance’ basically means ‘has met’ or ‘knows’).

Continuing with these questions and answers … (unfold)

Q: What does the ‘acquaintance’ example mean?

A: To give more specific examples, we could have, for \(R_a\): ‘is acquainted with’:

The following claims seem reasonable for the real world:

Sue \(R_a\) “Sue’s lecturer in the UK” \(\rightarrow\) true

Sue \(R_a\) “Sue’s mother” \(\rightarrow\) true

“Sue’s mother” \(R_a\) “Sue” \(\rightarrow\) true (perhaps the acquaintance relationship is ‘symmetric’)

“Sue’s mother” \(R_a\) “Sue’s lecturer in the UK” \(\rightarrow\) false.

If so, this demonstrates that the real-world relationship ‘is acquainted with’ is not ‘transitive’.

Sue: I was not sure what ‘acquaintance’ meant in this example. Now I do understand!
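The claims in this Q&A can be checked mechanically. The sketch below stores the relation as a set of ordered pairs and tests two properties by brute force. The pair (lecturer, Sue) is an added assumption (the text only states the other direction), and the helper names are illustrative.

```python
from itertools import product

PEOPLE = ["Sue", "mother", "lecturer"]

# Ordered pairs for which "x is acquainted with y" is taken to be true.
# ("lecturer", "Sue") is an assumption; the other pairs follow the Q&A.
ACQUAINTED = {
    ("Sue", "lecturer"), ("lecturer", "Sue"),
    ("Sue", "mother"), ("mother", "Sue"),
}


def r_a(x, y):
    """Binary relation R_a: True if x is acquainted with y."""
    return (x, y) in ACQUAINTED


def is_symmetric(rel, items):
    """True if x R y always implies y R x."""
    return all(rel(y, x) for x, y in product(items, repeat=2) if rel(x, y))


def is_transitive(rel, items):
    """True if x R y and y R z always imply x R z."""
    return all(
        rel(x, z)
        for x, y, z in product(items, repeat=3)
        if rel(x, y) and rel(y, z)
    )


print(is_symmetric(r_a, PEOPLE))   # True
print(is_transitive(r_a, PEOPLE))  # False
```

Transitivity fails here because the mother is acquainted with Sue, and Sue with the lecturer, but the mother is not acquainted with the lecturer.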

Another example of a binary relation (unfold Q&A)…

Another example of a binary relation is “smaller than” on the set of numbers. For some pairs \((x,y )\) of numbers, x is smaller than y, and for some it is not.

For a binary relation R, the expression \(x R y\) means that x is related to y according to R. For any pair \((x, y)\) of members of X , the statement \(x R y\) either holds or does not hold. For example, for the binary relation < on the set of numbers, we have \(3 < 5\), but not \(7<1\).

Q: Given that R stands for relation, what does \(xRy\) mean? Does it mean the relationship between x and y?

A: It means ‘x relates to y, by the relation R’. If the relation R is ‘greater than’, then \(xRy\) means ‘\(x\) is greater than \(y\)’.

Q: If so, what does yRx mean?

A: It means ‘y relates to x’ …. with the above example it means ‘y is greater than x’.

Sue: Aha!! I see.

O-R begin with the formal definition of ‘at least as desirable as’ (or ‘seen as at least as good as’). In other words,

“x is at least as desirable as y” is denoted \(x \succsim y\)

if the individual’s answer to the question regarding x and y is either “I prefer x to y” or “I regard x and y as equally desirable”.

O-R show, that in fact, all of the preference relationships can be denoted with the careful use of the \(\succsim\) operator. For example:

‘Indifference’:

\[x \sim y \text{ if both } x \succsim y \text{ and } y \succsim x\]

and

‘Strict preference’

\[x \succ y \text{ if } x \succsim y \text{ but not } y \succsim x\].

Why? In pure maths, and perhaps in economic theory, they value stating a concept with the fewest and most ‘primal’ definitions possible.

Technical note: This can help in proving properties of these objects and systems. By characterising something as the simplest mathematical object possible, we can apply all of the properties of that mathematical object that have been proven over the centuries.
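As a small illustration of building everything from one primitive, the sketch below defines indifference and strict preference purely in terms of a weak-preference function. The fruit scores are hypothetical and only serve to give the weak relation something to compare.

```python
from typing import Callable, Hashable

# A weak preference relation: weak(x, y) is True when x is at least as good as y.
Relation = Callable[[Hashable, Hashable], bool]


def indifferent(weak: Relation, x, y) -> bool:
    """x ~ y: both x is at least as good as y and y is at least as good as x."""
    return weak(x, y) and weak(y, x)


def strictly_prefers(weak: Relation, x, y) -> bool:
    """x is strictly preferred to y: x is at least as good as y, but not the reverse."""
    return weak(x, y) and not weak(y, x)


# Hypothetical example: one person's made-up ranking of fruit.
SCORE = {"apple": 3, "banana": 2, "plantain": 2}


def weak(x, y) -> bool:
    return SCORE[x] >= SCORE[y]


print(strictly_prefers(weak, "apple", "banana"))     # True
print(indifferent(weak, "banana", "plantain"))       # True
print(strictly_prefers(weak, "banana", "plantain"))  # False
```

Neither derived function looks at the scores directly; each one only ever asks the ‘at least as good as’ question.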

Exercise: to be sure you understand these things, close your book/computer and try to reconstruct the construction of strict preferences or indifference from only the \(\succsim\) operator. Can you give the intuition for this, explaining it clearly?

Consider the ‘Venn diagram’ of preferences over x and y from the O-R text (O-R, page 5, fig. 1.1)

Note: Osborne and Rubinstein have requested that we not reprint any figures directly from their book.

Note that the set of preferences where ‘x and y are equally desirable’ is included in both the set ‘x is at least as desirable as y’ and the set ‘y is at least as desirable as x’.

We consider some standard assumptions over preferences that, combined with some other assumptions, will be sufficient to justify using a ‘utility function’.

Other common assumptions (which we will return to when we consider consumer preferences):

‘More is Better’ (‘nonsatiation’ or ‘monotonicity’) type of assumptions.

‘Convex preferences,’ ‘diminishing marginal rates of substitution’ or, in multiple dimensions, ‘strict quasi-concavity’, something you may come across in your maths/optimization module.

In general, axioms like these also stand directly behind ‘revealed preference’ methods for measuring how much people value nonmarket goods, like clean air and national parks.

Completeness (informal definition): “Given two options, A and B, a person can state which option they prefer or whether they find both options equally attractive.”

The following is forbidden: “I cannot choose between a ski holiday and a beach holiday, yet I am not indifferent between these.”

Why wouldn’t I be able to express a preference? (Consider this for a moment, and then unfold…)

In the case above it seems strange to not be able to express a preference. But for other cases it may be extremely difficult or painful to compare two things. Imagine if you were asked to choose between ‘never seeing your mother’ and ‘never seeing your father’.

Alternately, imagine you are asked ‘do you prefer a blargh-colored shirt or a fleem-colored shirt’, where blargh and fleem are colors you have never heard of before.

In general, economists’ standard approach assumes we have a consistent preference ranking over all possibilities, and choose accordingly.

However, other social sciences see this differently.

Aside: Also ‘forbidden’ in the classical model: the time or frame in which I make the decisions must not affect my preferences (or choices). This will be relaxed when we discuss behavioral economics.

Definition: Completeness (From O-R)

Also please see my video above.

The property that for all alternatives \(x\) and \(y\) , distinct or not, either \(x \succsim y\) or \(y \succsim x\) (or both). Even more formally (optional)…

Definition (O-R): Complete binary relation

A binary relation \(R\) on the set \(X\) is complete if for all members \(x\) and \(y\) of \(X\), either \(x R y\) or \(y R x\) (or both).

A complete binary relation is, in particular, reflexive: for every \(x \in X\) we have \(x R x\).

In case you are confused, please unfold the Q & A below…

Q: I am still confused with the concept of ‘reflexivity’ after reading these paragraphs. Does it mean all ‘x’ are the same?

A: Not exactly, reflexivity is a property (a characteristic) of the relation \(R\) (where this ‘relation’ could be ‘greater than’, ‘smaller than’, ‘equal’, ‘having the same number of elements’, ‘preferred to’, etc.), not the property of a ‘mathematical object’ itself.

Reflexivity holds where an object always “relates to itself”. For example, in standard maths the relationship ‘greater than or equal to’ (\(\geq\)) is reflexive, while the relationship ‘greater than’ (\(>\)) is not reflexive. For any number \(x\) the statement \(x \geq x\) is true (e.g., \(10 \geq 10\) is true because \(10=10\)). However, the statement \(x>x\) is false … obviously a number cannot be greater than itself! (Only the Red Queen can exceed her own greatness.)

Sue: Hmmmm, why is \(10 \geq 10\) true??? How could 10 possibly be greater than 10? Shouldn’t the only correct statement be 10 = 10?

DR: Because this is the ‘greater than or equal to’ sign. Of course ‘10 is greater than or equal to 10’ because they are equal. Just as in computer programming, with an ‘OR’ operator the condition evaluates as true if either (or both) of the conditions are true.

Sue: Ooook, I think I understand it now. It makes sense to me when you linked it with computer programming; but what is the point of the \(\succsim\) sign? Couldn’t the authors only use the \(\geq\) sign?

DR: This is in fact a notation from set theory. We are expressing preferences over objects which may not be representable by a single number.

Sue: … But then why does ‘completeness’ automatically ensure the second property, ‘reflexivity’?

DR: The authors are saying that the first property of the binary relationship, ‘completeness’ automatically ensures the second property ‘reflexivity’. Equivalently (contrapositive statement) “a relationship that is not reflexive can not be complete.”

But they have only stated that this is the case. They have not PROVEN it. We would have to look up a proof or try to construct it ourselves. They don’t give the proof of this in the main text.
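A sketch of one such argument (not quoted from O-R): completeness must hold for all pairs of members of \(X\), including pairs in which both members are the same. So take any \(x \in X\) and apply the definition of completeness with \(y = x\):

\[x R y \text{ or } y R x \quad \text{with } y = x \text{ becomes} \quad x R x \text{ or } x R x\]

Both halves of the ‘or’ are now the same statement, so \(x R x\) must hold. Since \(x\) was an arbitrary member of \(X\), the relation \(R\) is reflexive.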

For a binary relation \(\succsim\) to correspond to a preference relation, we require not only that it be complete, but also that it be consistent in the sense that if \(x \succsim y\) and \(y \succsim z\) then \(x \succsim z\). This property is called transitivity. (O-R)

Intuitively: Transitive preferences are ‘internally consistent’: If I prefer A to B, and prefer B to C, then I must prefer A to C.

Formal definition of ‘transitive binary relation’ (optional)

Definition: Transitive binary relation [O-R]

A binary relation R on the set \(X\) is transitive if for any members \(x\) , \(y\) , and \(z\) of \(X\) for which \(x R y\) and \(y R z\), we have \(x R z\).

Let’s explore this more simply in terms of strict preferences, the \(\succ\) symbol.

Transitivity means:

\[ A \succ B \: and \: B \succ C \rightarrow A \succ C \]

If I prefer an Apple to a Banana and a Banana to Cherry then I prefer an Apple to a Cherry.

A similar idea holds if I am indifferent between one pair of these. (\(\sim\))

The transitivity of the ‘indifference relation’ \(\sim\) is a general property of ‘equivalence relations’.

\[ A \succ B \:\: and \: \: B \sim C \rightarrow A \succ C \] etc.

We may write this concisely as \(A \succ B \sim C\).

If this seems confusing it may be because it is too obvious (although behavioral and experimental economists claim to find violations of this, as we shall see).

If you found someone who strictly preferred an apple to a banana, a banana to a cherry, and a cherry to an apple, you could make a lot of money out of them!

Consider: How would you do this? (Unfold below for a description).

It works as follows (being a bit informal here):

Obtain (or borrow) an apple, a banana and a cherry. Then:

1. Offer to give this person an apple in exchange for their banana plus a tiny extra unit of banana (or ‘money’).

2. Next offer them a cherry for their apple plus a bit extra.

3. Next offer a banana for their cherry plus a bit extra.

Keep repeating steps 1-3 until you’ve drained them of all of their resources.
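This ‘money pump’ can be simulated in a few lines. The sketch below is illustrative only. It assumes the person starts out holding a banana and 100 cents, and that they accept any trade that gives them an item they strictly prefer in return for a fee of 1 cent. These numbers and the function name are assumptions.

```python
# Cyclic (intransitive) strict preferences, as (preferred, less_preferred) pairs:
# apple over banana, banana over cherry, cherry over apple.
STRICTLY_PREFERS = {("apple", "banana"), ("banana", "cherry"), ("cherry", "apple")}


def money_pump(start_item: str, wealth_cents: int, fee_cents: int = 1):
    """Keep trading with the person until they can no longer pay the fee.

    Returns (item they end up holding, remaining wealth, number of trades).
    """
    holding, trades = start_item, 0
    while wealth_cents >= fee_cents:
        # Offer the one item this person strictly prefers to what they hold.
        offer = next(a for (a, b) in STRICTLY_PREFERS if b == holding)
        holding = offer              # they accept the swap...
        wealth_cents -= fee_cents    # ...and pay the small fee each time
        trades += 1
    return holding, wealth_cents, trades


final_item, final_wealth, n_trades = money_pump("banana", wealth_cents=100)
print(final_item, final_wealth, n_trades)
```

Every single trade looks like an improvement to the person, yet they end with one piece of fruit and no money. With transitive preferences the loop would stop as soon as they hold their most-preferred item.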

DR #TODO: I intend to add a video here discussing this

Violations of transitive preferences? (Unfold)

Violations of transitivity? see…

Loomes, Graham, Chris Starmer, and Robert Sugden. “Observing violations of transitivity by experimental methods.” Econometrica: Journal of the Econometric Society (1991): 425-439.

Choi, Syngjoo, et al. “Who is (more) rational?.” The American Economic Review 104.6 (2014): 1518-1550.

We will return to this after we consider choice.

Feel free to skip the O-R discussion of symmetric and antisymmetric relations, if you want.

It is fun, but it is barely used later in the text.

Note: I define continuous preferences here, while O-R do not define this until the chapter ‘Consumer Preferences’. I’m not sure why they made that choice. Among other things, continuity is relevant for considering which preferences can be represented by a utility function.

What is continuity? One definition:

Continuity (informally): If \(A \succ B\), then there is some \(\epsilon > 0\) such that every \(C\) that ‘lies within an \(\epsilon\) radius of \(B\)’ also satisfies \(A \succ C\).

More completely, O-R define continuity as:

The preference relation \(\succsim\) on \(\mathbb{R}^2_{+}\) is continuous if whenever \(x \succ y\) there exists a number \(\epsilon> 0\) such that for every bundle \(a\) for which the distance to \(x\) is less than \(\epsilon\) and every bundle \(b\) for which the distance to \(y\) is less than \(\epsilon\) we have \(a \succ b\) (where the distance between any bundles \((w_1, w_2)\) and \((z_1, z_2)\) is \(\sqrt{|w_1 - z_1|^2 + |w_2 - z_2|^2}\)).

Discussion of continuity, and sufficient conditions for a utility representation of preferences:

We might derive preference relations from a few “basic considerations”, perhaps reflecting how people describe their own preferences (and choices) in conversation.

These can be considered in terms of individual preferences, or, as we will see later, ways to express group-preferences or social preferences. This is considered the ‘axiomatic approach’.

We will consider a few examples, some of which do satisfy completeness and transitivity, and some of which do not satisfy one or both of these.

O-R first consider a ‘value function’ “\(v\) that attaches to each alternative a number” … the preference relation here “is defined by \(x \succsim y\) if and only if \(v(x) \geq v(y)\)”.

This is close to the ‘utility function’ that we will discuss later. It is trivial to show that this is complete and transitive. (Can you show it?).
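A brute-force check is not a proof, but it can help build intuition before you attempt one. The sketch below defines a preference relation from a value function over a small set of alternatives and checks completeness and transitivity over every pair and triple. The alternatives and their values are hypothetical.

```python
from itertools import product

# Hypothetical alternatives with made-up values v(x).
V = {"tea": 2.0, "coffee": 3.5, "water": 2.0, "juice": 1.0}
ALTERNATIVES = list(V)


def weakly_prefers(x, y) -> bool:
    """x is at least as good as y if and only if v(x) >= v(y)."""
    return V[x] >= V[y]


def is_complete(rel, items) -> bool:
    """For every pair (x, y), distinct or not, x R y or y R x (or both)."""
    return all(rel(x, y) or rel(y, x) for x, y in product(items, repeat=2))


def is_transitive(rel, items) -> bool:
    """Whenever x R y and y R z, we also have x R z."""
    return all(
        rel(x, z)
        for x, y, z in product(items, repeat=3)
        if rel(x, y) and rel(y, z)
    )


print(is_complete(weakly_prefers, ALTERNATIVES))    # True
print(is_transitive(weakly_prefers, ALTERNATIVES))  # True
```

The general argument rests on the same two facts about numbers that the code relies on: any two numbers can be compared with \(\geq\), and \(\geq\) is itself transitive.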

“If and only if” is sometimes depicted as \(\leftrightarrow\) (and abbreviated ‘iff’).

When we say “statement A holds if and only if statement B holds”, we mean that whenever statement B holds statement A must hold, and also, whenever statement A holds statement B must hold. This also implies that if statement A does not hold, statement B cannot hold and vice versa.

Another way of saying this is “statement A is a necessary and sufficient condition for statement B.”

For example, in simple algebra we can assert (and prove):

\(x > y\) if and only if \(2x > 2y\), i.e., \(x > y \leftrightarrow 2x > 2y\),

To prove the ‘if and only if’ relationship, you need to prove both directions of this relationship. For this example, I would need to show both \(x > y \rightarrow 2x > 2y\) and \(2x > 2y \rightarrow x > y\).

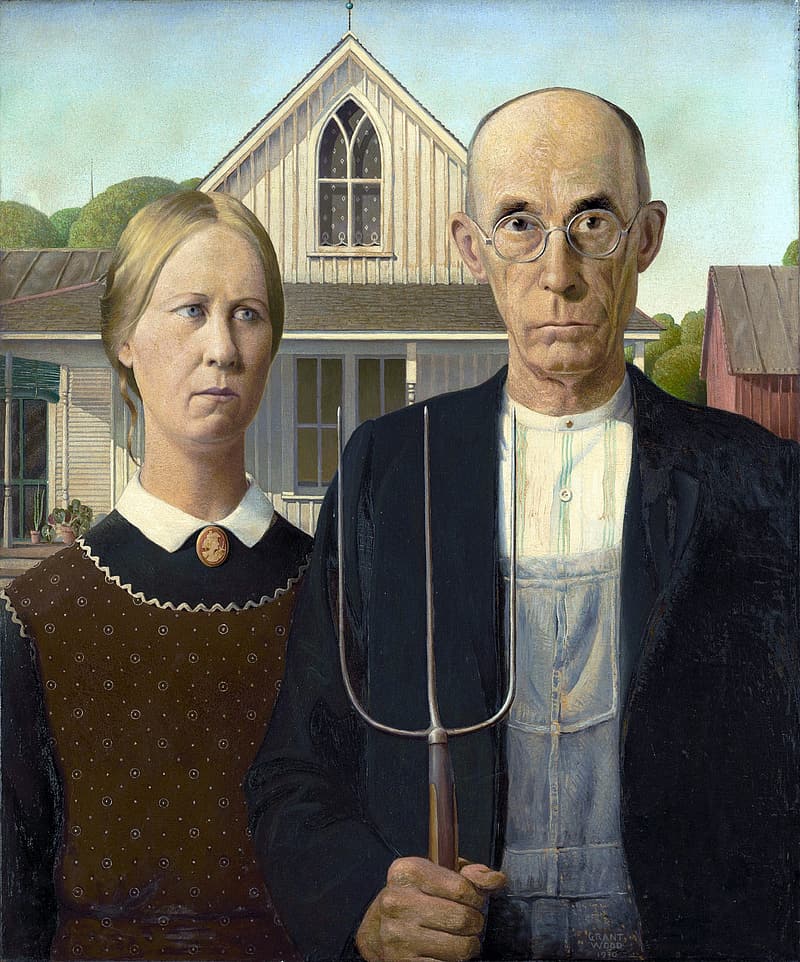

Figure 2.2: American Gothic, by Grant Wood

Distance function is a type of value function

Maybe you have heard someone say something like:

I know what I like; there is a perfect life for me. I simply want everything to look as close to this as possible.

O-R: “One alternative is “ideal” for the individual; how much he likes every other alternative is determined by the distance of that alternative from the ideal, as given by a function \(d\).”

This might be expressed as the preference relation \(\succsim\) defined by

\[x \succsim y \: \leftrightarrow \: d(x) \leq d(y)\]

We will return to “distance functions” later. We are all familiar with the ‘Euclidean distance’ function, which happens to represent the actual straight-line distance between two physical objects in three-dimensional space. However, there is no obvious single measure of the “distance between two bundles of goods and services, with many measurable attributes.” This issue also comes up in real-world analysis when considering (e.g.) consumer preferences over different products a firm could manufacture.

Note that the above is, in fact a value function, with the ‘value of alternative x’ expressed as \(v(x)=-d(x)\)

Negative because ‘the further from the ideal’, the worse it is.
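Here is a minimal sketch of distance-based preferences written as a value function. It assumes alternatives are points with two numeric attributes and that distance is Euclidean. Both are illustrative choices (as noted above, there is no single obvious distance measure), and the ‘ideal’ point is made up.

```python
import math

# Hypothetical ideal alternative, described by two numeric attributes.
IDEAL = (3.0, 4.0)


def d(x) -> float:
    """Euclidean distance of alternative x from the ideal (an assumed choice of d)."""
    return math.dist(x, IDEAL)


def v(x) -> float:
    """Value function: the further from the ideal, the lower the value."""
    return -d(x)


def weakly_prefers(x, y) -> bool:
    """x is at least as good as y if and only if d(x) <= d(y)."""
    return d(x) <= d(y)


near, far = (3.0, 5.0), (0.0, 0.0)
print(d(near), d(far))            # 1.0 5.0
print(weakly_prefers(near, far))  # True
print(v(near) >= v(far))          # True: same ranking via the value function
```

Ranking by \(d(x) \leq d(y)\) and ranking by \(v(x) \geq v(y)\) always agree, because multiplying both sides of an inequality by \(-1\) flips its direction.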

Remember the Blue Cat in the video? … When he considered his preferences over the teas, he said:

I care first and foremost about whether the tea box is rectangular. If several boxes are equally rectangular, then I care about which box is the most white. If the boxes are equally white, then I care about which box is biggest.

This seems to be an example of what we call ‘lexicographic preferences’.

Why are lexicographic preferences important? (Think and unfold:)

Ask people how they make their choices, particularly when they are shopping online. I bet that some people will describe a process of ‘ruling out’ one set of options after another. This is, in fact an optimal procedure for someone with lex preferences. This is not optimal for someone with the more standard preferences you may have seen in economics courses, someone who considers trade-offs between one attribute and another.

The ‘filtering’ is also consistent with the standard way data is organised. Try to search for a product or service online (on Amazon, or Kayak): it is hard to specify your trade off between different characteristics, but it’s easy to ‘narrow things down’ one category at a time.

In business data science, some ‘tree-based’ models of choice implicitly assume lex preferences. Business marketers speak of ‘hygiene factors’; if these are not achieved, the customer will walk away.

DR #TODO: I intend to add a video or audio content discussing the above, perhaps ‘going online’ or with a ‘vox pop’.

As stated by O-R:

An individual has in mind two [or more] complete and transitive binary relations, \(\succsim_1\) and \(\succsim_2\), each of which relates to one feature of the alternatives. For example, if \(X\) is a set of computers, the features might be the size of the memory and the resolution of the screen. (O-R, p. 7)

DR Todo: I intend to add a video here discussing this with a numerical example

Lexicographic preferences can be applied to any number of features, but we will only consider two features here.

The individual gives priority to the first feature, breaking ties by the second feature. (O-R, p. 7)

Formally, [for lexicographic preferences] the individual’s preference relation \(\succsim\) is defined by \(x \succsim y\) if:

- \(x \succ_1 y\) or

- \(x \sim_1 y\) and \(x \succsim_2 y\) .
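The two-part definition translates almost word for word into code. The sketch below follows the computer example and assumes, for illustration only, that each feature can be scored by a single number where more is better. O-R's definition is more general, requiring only that each feature has its own complete and transitive relation. The class and field names are hypothetical.

```python
from typing import NamedTuple


class Computer(NamedTuple):
    memory_gb: int      # feature 1 (given priority)
    resolution_px: int  # feature 2 (used only to break ties)


def lex_weakly_prefers(x: Computer, y: Computer) -> bool:
    """x is at least as good as y under lexicographic preferences."""
    if x.memory_gb > y.memory_gb:
        return True  # first condition: x is strictly better on feature 1
    # second condition: tied on feature 1 and x at least as good on feature 2
    return x.memory_gb == y.memory_gb and x.resolution_px >= y.resolution_px


a = Computer(memory_gb=16, resolution_px=1080)
b = Computer(memory_gb=8, resolution_px=2160)
c = Computer(memory_gb=16, resolution_px=1440)

print(lex_weakly_prefers(a, b))  # True: more memory wins, resolution is ignored
print(lex_weakly_prefers(c, a))  # True: memory tied, so resolution breaks the tie
print(lex_weakly_prefers(a, c))  # False
```

Python's built-in comparison of tuples uses this same ordering, comparing the first elements and moving on only when they tie, so here `x >= y` gives the same answers as `lex_weakly_prefers(x, y)`.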

From now on, I may use the abbreviation ‘lex preferences’ to mean ‘lexicographic preferences’.

Are lexicographic (lex) preferences complete?

Its completeness follows from the completeness of \(\succsim_1\) and \(\succsim_2\). (O-R, p. 7)

If we suppose that the ‘sub-preference orderings’ \(\succsim_1\) and \(\succsim_2\) can be applied to these features of any alternative…

then we can apply the procedure above to determine the overall preference ordering \(\succsim\) between any two pairings.

DR Todo: I intend to add a video here covering this

Suppose that \(x \succsim y\) and \(y \succsim z\).

To show transitivity by our definition, we now need to show that this necessarily implies that \(x \succsim z\) holds.

Also note that the above example happens to be perfectly general (we sometimes state this as ‘without loss of generality’ or ‘WLOG’). As we have complete preferences, for any three options, it must be that you can choose labels for them x, y, and z, such that \(x \succsim y\) and \(y \succsim z\).

There are (only) two possible cases:

Note that these are the only two possible cases. (We know this because case 2 is simply the ‘negation’ of case 1. In standard logic either something is true, or it is not true). Thus if we can prove transitivity for both case 1 and case 2, we have proved it ‘for all possible cases’.

Case 1: “The first feature is decisive when comparing \(x\) and \(y\): \(x \succ_1 y\).”

Case 2: “The first feature is not decisive when comparing \(x\) and \(y\)…”

Considering Case 1:

Given, by the above assumption, that \(y \succsim z\), it must be that \(y \succsim_1 z\)

… (i.e., y is “at least as good as z in its first feature”; otherwise, according to our preferences, y could not be ‘at least as good as z’).

so by the transitivity of \(\succsim_1\) [which was part of the definition of lex preferences]…

we obtain … \(x \succ_1 z\)

and thus \(x \succsim z\)

Wait, why does \(x \succ_1 y\) combined with \(y \succsim_1 z\) imply that \(x \succ_1 z\)? (think, and then unfold).

This seems intuitively obvious: if ‘feature 1 of x is strictly better than feature 1 of y’ and ‘feature 1 of y is at least as good as feature 1 of z’, then it should be that ‘feature 1 of x is strictly better than feature 1 of z’. But if we are compulsive mathematicians, we would need to actually formally prove this, as Problem 1b requests.

… And why does \(x \succ_1 z\) imply that \(x \succsim z\)? (think, and then unfold)

By condition (i) in the definition of lex preferences, if \(x \succ_1 y\) then \(x \succsim y\). (Obviously, as this is a general definition, it also applies if we swap z for y in this statement.)

… “But wait, why are you only stating \(x \succsim z\) (‘x is at least as good as z’)? Don’t we know that \(x \succ z\) (‘x is strictly preferred to z’)?” (unfold)

Three responses:

For this case, \(x \succ z\) does not come directly out of the definition of lex preferences. (I think this could be derived, but we do not need to.)

Our definition of transitivity involves the \(\succsim\) operator, and not the \(\succ\) operator; we need to prove it precisely as defined.

Anyways, \(x \succ y\) implies \(x \succsim y\) (but not vice versa). \(\succsim\) is the ‘weaker’ condition. So if \(x \succ y\) is a true statement then \(x \succsim y\) is as well (always).

Wait we are not done! We only proved this for one case; now we must consider the ‘negation’ of this case:

Considering Case 2…: “The first feature is not decisive when comparing \(x\) and \(y\)”: \(x \sim_1 y\)

…(and \(x \succsim_2 y\) must hold as we assumed that \(x \succsim y\) overall.)

Case 2 is further broken down into two comprehensive sub-cases, which I will call 2a and 2b. Given that the first feature is not decisive for comparing x and y…

…then either the first feature is decisive when comparing y and z (‘case 2a’), or it is not (‘case 2b’).

Either…

2a: \(y \succ_1 z\)

… then … “we obtain \(x \succ_1 z\) and therefore \(x \succsim z\).”

O-R write that this follows “from the transitivity of \(\succ_1\)”. I believe that here they mean we know that if \(A \sim B\) and \(B \succ C\) then \(A \succ C\) by the property of these logical relations \(\sim\) and \(\succ\).

Thus we must have that \(x \sim_1 y\) and \(y \succ_1 z\) implies \(x \succ_1 z\)

or

2b: \(y \sim_1 z\)

implying that \(y \succsim_2 z\), for y to be at least as good as z overall, as assumed.

By the transitivity of \(\sim_1\) we obtain \(x \sim_1 z\) …

(recalling \(x \sim_1 y\) is what defined case 2)…

and by the transitivity of \(\succsim_2\) we obtain \(x \succsim_2 z\). Thus \(x \succsim z\).

Thus we have shown, for all possible cases, that for lexicographic preferences \(\succsim\) as defined above, \(x \succsim y\) and \(y \succsim z\) together imply \(x \succsim z\). That is, these preferences are transitive.

We can now write quo erat demonstrandum or ‘Q.E.D’, meaning ‘what was to be demonstrated’ [has been demonstrated]. This is sometimes expressed with the symbol

\[\blacksquare\].

Why did we do this??

Gee, this took a long time.

Was it a fun puzzle or a tedious chore? Why did I show you this?

(Unfold the box below for my thoughts on this.)

I think it is good for you to get a sense of what mathematical/theoretical economics proofs look like. It’s good for improving the rigorous structure of your arguments in general. It may be helpful for future reading. It helps you be sure that you understand the concepts defined.

Also, we learned a few concepts here in doing this. I would suggest that we learned things like…

A proof can be constructed by demonstrating that something must hold for all possible cases. We can do it by dividing up the space of all possible cases and showing it for each part of this space.

The idea of “weaker and stronger conditions” (strict vs weak preference)

How to use ‘general properties of mathematical objects’ (like the transitivity of the \(\succsim\) operator) to construct proofs for specific cases.

Mathematical and logical arguments often contain many simple steps, perhaps too many to keep in your head all at the same time. That’s why it’s important to write things down and not try to do everything in ‘spoken form.’

The individual has in mind \(n\) considerations, represented by the complete and transitive binary relations \(\succsim_1, \succsim_2,..., \succsim_n\).

For example, a parent may take into account the preferences of her \(n\) children.

In the movie (and book) “Sophie’s Choice” a mother is asked to make a horrible choice (very disturbing clip here). This may be a case in which we think preferences are not ‘complete’; we cannot express preferences among our children’s lives.

Or, getting back to our basic idea, an individual may simply have preferences over \(n\) characteristics of an outcome (or a product, or a bundle of products)

Define the binary relation \(\succsim\) by \(x \succsim y\) if \(x \succsim_i y\) for \(i = 1,...,n\).

In other words, this may be a person who says something like:

I only prefer a holiday in Jamaica to a holiday in France if Jamaica is better than France in all attributes (or vice-versa). Otherwise I can’t say anything about it.

Or a group policy-maker who says (unfold):

Group PM: “I can only state that the Conservatives’ policy is better than the Liberals’ if it is better for everyone in our society (or vice-versa). Otherwise, we don’t have a group preference.”

In fact, these precise definitions of preferences, and their characteristics, are useful in considering the idea of ‘public choice’ and social welfare functions. We may derive things like ‘impossibility theorems’ that tell us the limits of what group-decision-making can accomplish.

O-R discuss this in chapter 20, ‘Aggregating preferences’, but we probably won’t have time to cover it directly in this module.

Unanimity rule, properties:

This binary relation is transitive but not necessarily complete.

Specifically, if two of the relations \(\succsim_i\) disagree (\(x \succ_j y\) and \(y \succ_k x\)), then \(\succsim\) is not complete.

How do we know it’s transitive? (unfold next section)

Informally: ‘If X is at least as good as Y in all aspects, and Y is at least as good as Z in all aspects, then X is at least as good as Z in all aspects.’

How does the example in the prior fold show that it’s not complete? (unfold below)

DR Todo: I intend to add a video here discussing this

If ‘two of the relations disagree’ when comparing any two elements in the choice set (x and y), then the condition ‘x is at least as good as y in all aspects’ does not hold, nor does ‘y is at least as good as x in all aspects’ hold. So the unanimity preference relation ‘rule’ does not state that either is preferred.

Maybe this is obvious when you think in the group context: If we can only make a decision if everyone agrees that one option is better than the other, there will be cases where we cannot say either way which is preferred.

The ‘unanimity rule’-based preferences are not complete, which suggests this has limited use as a decision rule (when we get to actual ‘choice’).
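To make the unanimity rule concrete, here is a minimal Python sketch. The three destinations and their scores are invented purely for illustration (they are not from O-R); each consideration is represented by a numeric score, where higher is better, and the helper names are hypothetical.

```python
from itertools import product

# Hypothetical scores (higher is better) on n = 3 considerations.
scores = {
    "Jamaica": {"weather": 9, "food": 6, "price": 4},
    "France": {"weather": 6, "food": 9, "price": 5},
    "Norway": {"weather": 3, "food": 5, "price": 2},
}

def unanimity_weakly_prefers(x, y):
    """x is at least as good as y only if it is so on every consideration."""
    return all(scores[x][k] >= scores[y][k] for k in scores[x])

def comparable(x, y):
    """Completeness requires this to hold for every pair of alternatives."""
    return unanimity_weakly_prefers(x, y) or unanimity_weakly_prefers(y, x)

# Transitivity: whenever x >= y and y >= z unanimously, x >= z unanimously.
transitive = all(
    unanimity_weakly_prefers(x, z)
    for x, y, z in product(scores, repeat=3)
    if unanimity_weakly_prefers(x, y) and unanimity_weakly_prefers(y, z)
)

print("Jamaica vs France comparable?", comparable("Jamaica", "France"))
print("Transitive on this set?", transitive)
```

With these made-up scores, Jamaica and France cannot be ranked (the weather and food considerations disagree), while each is unanimously at least as good as Norway.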

‘Majority rule’ (read about this in O-R) solves this problem but brings another problem, which leads to the ‘Condorcet paradox’, also known as the ‘voting paradox’. After reading this in O-R, unfold below for an example of how this makes it difficult to clearly express ‘public choice’ through majority voting.

Voting paradox example

Suppose we are trying to choose, as a society, between three options in our choice set \(X\): a Green Park, Public Housing, or Private Housing.

Suppose we have a local council with one member from each party (representing equal-sized constituencies). Preferences of these groups are as follows (considering strict preferences; as O-R state, the preferences here are ‘anti-symmetric’):

Green party: Green Park \(\succ\) Public housing \(\succ\) Private housing

Labour: Public housing \(\succ\) Private Housing \(\succ\) Green Park

Conservatives: Private Housing \(\succ\) Green Park \(\succ\) Public housing

Which proposal would win if they voted on:

a Green Park versus Public housing?

a Green Park versus Private housing?

Private housing versus Public housing?

Try each vote and see who wins.

Does a majority vote reveal a clear ‘consistent social preference’?

No, not here.
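If you want to check your answers, the three pairwise votes can be tallied with a short Python sketch. The rankings are copied from the example above; the function name `majority_winner` is just an illustrative choice.

```python
from itertools import combinations

# Each council member's strict ranking, best first (from the example above).
rankings = {
    "Green": ["Green Park", "Public Housing", "Private Housing"],
    "Labour": ["Public Housing", "Private Housing", "Green Park"],
    "Conservative": ["Private Housing", "Green Park", "Public Housing"],
}

def majority_winner(a, b):
    """Return whichever of a, b is ranked higher by a majority of members."""
    votes_for_a = sum(r.index(a) < r.index(b) for r in rankings.values())
    return a if votes_for_a > len(rankings) / 2 else b

options = rankings["Green"]
results = {(a, b): majority_winner(a, b) for a, b in combinations(options, 2)}
for (a, b), winner in results.items():
    print(f"{a} vs {b}: {winner} wins")
```

Each pairwise vote is decided 2 to 1, and the winners form a cycle: the Green Park beats Public Housing, Public Housing beats Private Housing, and Private Housing beats the Green Park. So the majority relation is not transitive.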

In the O-R questionnaire they asked people to make 36 choices between pairs of alternatives taken from a set of 9 alternatives.

Each alternative is a description of a vacation package with four parameters: the city, hotel quality, food quality, and price.

They varied the ordering of the description of these features.

e.g., one choice could be between

They found that 85% of the roughly 1300 respondents showed at least one ‘intransitivity’, where their stated preferences implied “A is preferred to B”, “B is preferred to C”, and “C is preferred to A” for some combination of three alternatives.

Or they simply reversed themselves, stating ‘A is preferred to B’ in one case and ‘B is preferred to A’ in another case. That is also a violation of transitivity, of course.

They also note that

the order in which the features of the package are listed has an effect on the expressed preferences.

This is something we call a ‘framing effect’; the way an option is stated affects people’s choices (or stated preferences).

This is a major discussion in behavioral economics. Framing effects include things like ‘anchoring’, ‘halo effects’, ‘decoy effects’, etc. (Look up what some of these mean if you are interested.) Framing effects might be interpreted as a violation of the idea of ‘transitive preferences’. On the other hand, one might argue instead that ‘the frame is itself a characteristic of the product.’

This may be suggestive evidence, but I don’t think it should be considered definitive.

One possible objection… These were ‘hypothetical’ questions; no one was actually making real choices here. In contrast, Economics research usually emphasizes real ‘incentivised’ choices, either in lab or field experiments or relying on ‘observational’ data. Thinking about these choices carefully is mentally costly. Respondents may not have put in the time to carefully consider these choices.

Video: “A utility function represents preferences”

Recall, a ‘value function’ simply assigns a real number to each alternative. A Utility function is simply a value function which ‘represents preferences’.

Utility function (O-R definition)

For any set \(X\) and preference relation \(\succsim\) on \(X\), the function \(u : X \rightarrow \mathbb{R}\) represents \(\succsim\) if

\[x \succsim y \text{ if and only if } u(x) \geq u(y)\]

We say that \(u\) is ‘a utility function for \(\succsim\)’.

\(u : X \rightarrow \mathbb{R}\) means that the function \(u\) takes any element in the set \(X\) (perhaps each element is a combination of goods, services, and leisure time) and returns a real number.

To make this concrete…

Can you construct a utility function that ‘represents’ preferences \(a \succ b \sim c \succ d\) on the set \(\{a,b,c,d\}\)? (Unfold)

One such utility function (O-R page 9):

\(u(a)=5\), \(u(b)=u(c)=-1\), \(u(d)=-17\).

Of course many other utility functions also represent these preferences.
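As a quick check of what ‘represents’ means, the sketch below tests candidate utility functions against the preferences \(a \succ b \sim c \succ d\). The encoding of the preferences as indifference classes, and the names `represents`, `u_other` and `u_wrong`, are my own illustrative assumptions; only `u_book` comes from the text.

```python
from itertools import product

# Preferences a > b ~ c > d, encoded as indifference classes, best first.
classes = [{"a"}, {"b", "c"}, {"d"}]
level = {x: i for i, cls in enumerate(classes) for x in cls}

def weakly_prefers(x, y):
    """x is at least as good as y: same or better indifference class."""
    return level[x] <= level[y]

def represents(u):
    """True if u(x) >= u(y) exactly when x is at least as good as y."""
    return all(weakly_prefers(x, y) == (u[x] >= u[y])
               for x, y in product(level, repeat=2))

u_book = {"a": 5, "b": -1, "c": -1, "d": -17}   # the function given above
u_other = {"a": 3, "b": 2, "c": 2, "d": 1}      # a different representation
u_wrong = {"a": 5, "b": 0, "c": -1, "d": -17}   # breaks the indifference b ~ c

for name, u in [("u_book", u_book), ("u_other", u_other), ("u_wrong", u_wrong)]:
    print(name, represents(u))
```

Only the ordering of the numbers matters: `u_book` and `u_other` both pass, while `u_wrong` fails because it ranks b strictly above c.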

There is some debate about the meaning and interpretation of utility, particularly in the Behavioural Economics literature.

Although the NS text describes it as “The pleasure or satisfaction that people get from their economic activity” I think that most economists do not see it that way.

Perhaps the modern non-behavioral definition of utility would be ‘the thing that drives choices, subject to constraints’.

So if utility is (at best) defined as ‘the thing that people maximise’, does it have any meaning, or does it simply lead to circular reasoning?

(My thoughts in the fold).

My opinion is that there is some ‘bite’.

A defining feature of the standard Economics approach is that decisions are made as if all characteristics of outcomes can be compared and evaluated, thus we can reduce everything to a single dimension, ‘utility’, which is maximised. Other social sciences often reject this. Also note that behavioral economics often separates preferences from choices, as in the O-R text, and some models even separate ‘choice-utility’ from ‘realized utility.’

Consider: Measuring and comparing utilities (unfold)

In general, we don’t imagine that we can somehow measure an individual’s actual ‘amount’ of utility. To the extent that we can measure it at all, it is only inferred indirectly, from the choices people make.

See D. Colander, ‘Edgeworth’s Hedonimeter and the Quest to Measure Utility’, Journal of Economic Perspectives, Spring 2007: 215-225, for a discussion of the history of this idea.

So utility is not ‘directly observable and measurable’.

Unlike midi-chlorians or thetans.

Utility is seen to govern an individual’s choices, and is thus only inferred indirectly, from the choices people make.

Consider: Revealed preference:

If Al buys a cat instead of a dog, and the dog was cheaper, we assume Al gets more utility from the cat.

Figure 2.3: Al and his cat

Such methods are used to try to measure the value of goods that have no observed market price, for public policy choices.

E.g., if people drove 20km to go to park B, they must value it at no less than the cost of driving there.

If people pay £20k more for a house with a view of the lake (ceteris paribus), or in a neighborhood with better air quality…

This raises another issue: can we compare whether one person gets ‘more’ or ‘less’ utility than another? Can we know whether a policy can “increase one person’s utility more than it decreases another person’s utility”? These issues are considered in modeling social welfare and social choices.

In more detailed discussions, we might speak of ‘cardinal utility’ and ‘ordinal utility’.

Empirically, it is hard to imagine how these could be measured: if utility is only indirectly and partially observable through an individual’s choices… when do we observe choices that trade off between one person and another in ways that reflect the ‘sum of their utilities’?

Never, I think.

Here O-R give a fancy definition of ‘maximal element with respect to \(\succsim\)’ … basically, the ‘best (or at least tied-for-best)’ element in the set, considering these preferences.

Similarly for the ‘minimal’ element.

O-R Lemma 1.1 … in any ‘nonempty finite set’ there must be at least one minimal and at least one maximal element. This is pretty obvious and intuitive.

Still, it is worth reading over the proof of this as it gives you an easy example of ‘proof by induction’. The logic of ‘proof by induction’… (to prove that something holds for a set of any finite size \(N\)):

Aside: is there a ‘smallest interesting integer number’? Can you prove why or why not?

No, there cannot be, I can ‘prove it’. Suppose that \(K\) were the ‘smallest interesting integer’. But then integer \(K-1\) would be the ‘largest non-interesting number.’ That fact alone should make it interesting! Therefore, there cannot be any smallest interesting integer!

(I think this passes for a joke in the maths community.)

The following proposition, and other propositions extending this, are fundamental to the idea that we can conduct our economic analysis as if people actually consulted a utility function.

Proposition (O-R 1.1): Every preference relation on a finite set can be represented by a utility function.

Note that this proposition is stated for a finite set; there must be a finite number of elements to choose among. It is not guaranteed (at least not without further conditions) for a preference relation on an infinite set. For example, the set of elements ‘any real number between 0 and 10’ is an infinite set.

You may skip the proof of this proposition, if you like.

Lexicographic preferences on a finite set (like the set of seats in a movie theatre) can be represented by a utility function.

Following O-R example 1.2:

Please view figure 1.3 from O-R (page 12).

Osborne and Rubinstein have requested that we not reprint any figures directly from their book.

DR Todo: I hope to add a video here discussing this

Just rank the seat preferences, and assign the lowest utility number to the least-preferred seat (most front and right). Then increase the utility by 1 as you move each seat to the left. Then start at the next row back, all the way to the right, adding 1 to the utility, etc.

O-R give another (equivalent) utility function for this, noting that, for this example there are 40 rows of seats and 50 columns (50 seats per row).

\[u(x)=50 r(x) - c(x) + 1\]

where \(r(x)\) is the row number and \(c(x)\) the column number. (I’m not sure why they added the ‘+1’, there is no need for a utility number to be positive.)

There are ‘important’ economic theorems specifying ‘sufficient conditions for preferences to be represented by a utility function’. We will consider this briefly.
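Returning to the cinema-seat example for a moment: the sketch below compares the ‘count up from the worst seat’ procedure with the formula \(u(x)=50 r(x) - c(x) + 1\), and checks that the formula represents the lexicographic preferences. It assumes (this is my reading of the example, not something stated above) that rows are numbered 1 to 40 starting from the screen, columns 1 to 50 from left to right, and that the individual prefers seats further back first and further left second.

```python
ROWS, COLS = 40, 50

def lex_weakly_prefers(x, y):
    """Seat x = (row, col) is at least as good as y: further back first, then further left."""
    (rx, cx), (ry, cy) = x, y
    return rx > ry or (rx == ry and cx <= cy)

def u(seat):
    """The utility function quoted above: 50 * row - column + 1."""
    row, col = seat
    return 50 * row - col + 1

seats = [(r, c) for r in range(1, ROWS + 1) for c in range(1, COLS + 1)]

# Counting procedure: the front-right seat gets 1, add 1 per seat moving left,
# then continue from the right-hand end of the next row back.
counted = {}
k = 0
for r in range(1, ROWS + 1):
    for c in range(COLS, 0, -1):
        k += 1
        counted[(r, c)] = k

same_numbers = all(counted[s] == u(s) for s in seats)
represents = all(lex_weakly_prefers(x, y) == (u(x) >= u(y))
                 for x in seats for y in seats)
print(same_numbers, represents)
```

Under these numbering assumptions the two approaches assign identical numbers, with the worst seat receiving utility 1, which may be one reason for the ‘+1’.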

‘Continuous preferences’ (defined above) as well as completeness and transitivity \(\rightarrow\) a utility representation; these are jointly sufficient conditions for preferences to be represented by a utility function. Continuous preferences will also ensure continuous utility functions and allow us to discuss concepts such as ‘marginal utility’ and ensure gradually sloping demand curves.

Note that everywhere-differentiable utility functions are continuous.

However, ‘lexicographic’ preferences are not continuous and, on an infinite set, they cannot be represented by a utility function (see video below).

For example, consider the infinite set \(\mathbb{R}^+\), the set of all non-negative real numbers. This is a reasonable choice set to consider, perhaps, in some contexts. E.g., ‘I may be able to consume any zero or positive amount of food’ (note we have not yet brought in constraints, we are just considering preferences).

Suppose ‘I always find more food at least as good as less food’. We could represent this preference as

\(x \succsim y\) if and only if \(x \geq y\)

This is easily represented by the utility function \(u(x)=x\).

… or in fact, by any utility function which is an increasing function of \(x\), such as \(u(x)= x^2 + \ln(x) + 108\) (for \(x > 0\), since \(\ln(0)\) is undefined).

In contrast, consider lex preferences on an infinite set, the ‘unit square’, where the first priority is the first coordinate and the second priority is the second coordinate. This is like the ‘movie theatre’ example, except instead of specific seats, one can locate at any exact point in the square.

See O-R page 13, figure 1.4, for a depiction of lex. preferences within a square.

so that, for example, \((0.3,0.1) \succsim (0.2,0.9) \succsim (0.2,0.8)\)

O-R show and then ‘prove’ (alluding to a more general proof), that:

Notation: The ‘{}’ enclose the definition of the set. “\((x_1,x_2)\)” means we are talking about sets of pairs of elements. The colon (“:”) is read as ‘such that’. “\(x_1,x_2 \in [0,1]\)” means that each of these elements must be greater than or equal to 0, and less than or equal to 1. The brackets (“[]”) specify a ‘closed set’ including these boundaries.

The (lexicographic) preference relation \(\succsim\) on \(\{(x_1,x_2) : x_1,x_2 \in [0,1]\}\) ….

defined by \((x_1,x_2) \succ (y_1, y_2)\) if and only if either (i) \(x_1 > y_1\) or (ii) \(x_1 = y_1\) and \(x_2 > y_2\) is not represented by any utility function.

You may find it more worthwhile to ‘try and fail’ to give a utility function depicting such preferences. Of course, no matter how many times you fail, you have not proved that it is ‘impossible’. But it may help you gain intuition.
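Here is one way to ‘try and fail’ in code. A tempting guess is to put a very large weight on the first coordinate; the sketch shows that whatever finite weight you pick, some pair of points gets ranked the wrong way round. The guess `u_guess`, the helper `counterexample`, and the specific points are my own illustrative assumptions, and (as noted above) failing with one family of guesses proves nothing about impossibility in general.

```python
def lex_strictly_prefers(x, y):
    """(x1, x2) is strictly preferred to (y1, y2): x1 > y1, or x1 == y1 and x2 > y2."""
    return x[0] > y[0] or (x[0] == y[0] and x[1] > y[1])

def u_guess(point, weight):
    """Hypothetical candidate: a heavy weight on the first coordinate."""
    x1, x2 = point
    return weight * x1 + x2

def counterexample(weight):
    """Two points in the unit square that u_guess ranks the wrong way (weight >= 1)."""
    better = (0.5 + 0.5 / weight, 0.0)  # slightly larger first coordinate
    worse = (0.5, 1.0)                  # smaller first coordinate, best second one
    return better, worse

for weight in (10, 1000, 10**6):
    better, worse = counterexample(weight)
    print(weight,
          lex_strictly_prefers(better, worse),              # truly preferred
          u_guess(better, weight) < u_guess(worse, weight))  # yet scored lower
```

Increasing the weight only shrinks the gap in the first coordinate needed to break the guess; it never removes the problem.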

You can skip the proof that follows this.

O-R say ‘Increasing function of a utility function is a utility function’. But it’s more than this. It is the same utility function, yielding the same choices.

* Note that I say ‘regular utility function’. When we consider uncertainty in the ‘expected utility’ framework, this will not hold with respect to ‘increasing transformations’ of the functions we will be dealing with, only with respect to ‘affine (linear) transformations’.

* Note in the above video I equivocated over whether unanimity preferences can be represented by a utility function. Such preferences are not complete, so they cannot be fully represented: where one bundle is better by one criterion and another by a different criterion, we cannot assign utility numbers to either of these bundles.

This is pretty important because it gives us a sense of how closely we might be able to identify a utility function. As O-R note:

if the function \(u(x,y)\) represents a given preference relation then so does the function \(3u(x,y) - 7\) [for example]

This means that even if we knew a person’s preferences, we wouldn’t know whether the utility function was “actually” \(U(Apples, Bananas) = A + B^2\) or \(U(Apples, Bananas) = 4(A + B^2)^3 + 37\) etc. These utility functions express the same preferences.
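A small numerical check of this claim, as a sketch: the two functions are the ones above, while the grid of integer bundles and the deliberately non-increasing transformation `v_broken` are my own additions for illustration.

```python
from itertools import product

def u(a, b):
    """U(Apples, Bananas) = A + B^2."""
    return a + b**2

def w(a, b):
    """An increasing transformation of u: 4 * u^3 + 37."""
    return 4 * u(a, b) ** 3 + 37

def v_broken(a, b):
    """A transformation that is NOT increasing in u: (u - 10)^2."""
    return (u(a, b) - 10) ** 2

# Hypothetical grid of bundles: 0 to 5 apples and 0 to 5 bananas.
bundles = list(product(range(6), repeat=2))

def same_ranking(f, g):
    """True if f and g order every pair of bundles identically."""
    return all((f(*x) >= f(*y)) == (g(*x) >= g(*y))
               for x, y in product(bundles, repeat=2))

print(same_ranking(u, w))         # increasing transformation
print(same_ranking(u, v_broken))  # non-monotonic transformation
```

The first comparison agrees on every pair of bundles; the second does not, which is why the transformation must be increasing.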

In general:

The notation here is a bit loose perhaps? When we say \(x \in X\) we are considering elements \(x\) that may have multiple components… \(x\) may be a bundle of goods and services, etc., so \(u(x)\) may be a function of several variables.

O-R Proposition 1.3:

Let \(f : \mathbb{R} \rightarrow \mathbb{R}\) be an increasing function (*). If \(u\) represents the preference relation \(\succsim\) on \(X\) , then so does the function \(w\) defined by \(w(x) = f(u(x))\) for all \(x \in X\).

The proof is pretty quick and reasonable:

\(w(x) \geq w(y)\) is the same thing as saying \(f(u(x)) \geq f(u(y))\), by the above definition.

Because \(f\) is an increasing function (*), \(f(u(x)) \geq f(u(y))\) holds if and only if \(u(x) \geq u(y)\).

\(u(x) \geq u(y)\) is true if and only if \(x \succsim y\), by the definition of utility functions ‘representing preferences.’

Chaining these equivalences, \(w(x) \geq w(y)\) if and only if \(x \succsim y\), so \(w\) also represents \(\succsim\).

* An increasing function is simply a function that increases in its argument. By definition, \(f\) is an increasing function if and only if \(f(a)>f(b)\) whenever \(a>b\).

At this point, you may want to work through the exercises on preferences and utility. (But not the exercises on choice just yet.)

Note that this follows much of O-R chapter 2. You must read that chapter along with these notes. All quotes are from O-R unless otherwise noted.

Recall … a preference relation refers only to the individual’s mental attitude, not to the choices she may make. In this chapter, we describe a concept of choice, independently of preferences. (O-R, p. 17)

The separation of Preferences and Choice in their text, into separate concepts and chapters, is a bit unusual. I suspect that this reflects how these are often separated in Behavioral Economics; unlike in classical Economics, choices might not simply optimise with respect to an individual’s preferences (and ‘experienced’ utility).

Given a set \(X\), a choice problem for \(X\) is a nonempty subset of \(X\)…

and a choice function for \(X\) associates with every choice problem \(A \subseteq X\) a single member of \(A\) (the member chosen).

(O-R, def 2.1)

This is ‘dense language’. In more plain English, a ‘choice problem’ is a subset of alternatives (perhaps those alternatives which are ‘feasible’ for the individual to choose among).

A ‘choice function’ specifies, for any given (sub)set of alternatives, which one will be chosen.

O-R loosely define a rational individual as an individual who:

But this allows any set of preferences; even ‘preference for apparent pain’. This is perhaps a ‘weak’ definition of rationality.

TODO: I intend to include a video here

Let’s call this rational individual ‘Ratchell’.

If…

Ratchell’s preferences can be represented by the utility function* \(u\) and

he is rational in the sense defined above (he chooses the ‘best feasible option’ according to his preferences) …

…then his ‘choice function’ will solve the ‘problem’

\[\max\{u(x): x \in A\}.\]

We read this as “he chooses x to maximize the utility function \(u(x)\) under the constraint that \(x\) is in the ‘feasible’ set \(A\).” *

* Set \(A\): This may be the set of all alternatives Ratchell can afford to buy given his wealth, or the set of combinations of goods and leisure time he can consume given his productivity and the prices he faces in the market, etc.
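In code, a rational choice function of this kind is just ‘pick the utility-maximising member of the feasible set’. The sketch below is purely illustrative: the utility function, the 16 available hours and the wage of 2 goods per hour are all invented assumptions, not taken from O-R.

```python
def u(x):
    """Hypothetical utility over bundles x = (goods, leisure_hours)."""
    goods, leisure = x
    return (goods * leisure) ** 0.5

def choice(A):
    """Rational choice: the member of the feasible set A with the highest utility."""
    return max(A, key=u)

# Hypothetical feasible set: 16 hours split between leisure and work,
# where each hour worked buys 2 units of goods.
A = [(2 * (16 - leisure), leisure) for leisure in range(17)]

best = choice(A)
print(best, u(best))
```

Note that Python’s `max` returns the first maximiser it encounters, so if several alternatives tie for best, this sketch picks one of them arbitrarily (by list order).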

Stepping away from O-R now, we may specify a “utility function” of two goods (or aggregations of goods) \(X\) and \(Y\).

This may be familiar from your undergraduate work. If so, you can skip this sub-section and go directly to ‘rationalising choice’

\[Utility = U(X,Y; other)\]

We can get a lot of insight from considering models of an individual’s preferences over only two goods. These two ‘goods’ could represent, for example:

Leisure and ‘goods consumption’

Food and non-food

Coffee and tea (holding all else constant)

Recall \(U(X,Y)\) expresses a function with two arguments, X and Y.

\(U(X,Y)\) must take some value for every positive value of X and Y.

In general a function of X and Y might increase or decrease in either X or Y, or increase over some ranges and decrease over other ranges of these two arguments.

For example, consider the function ‘altitude of land in Britain as a function of degrees longitude and latitude’.

\(U(X,Y)\) expresses a general function; I haven’t specified what this function is.

E.g., it could be \(U(X,Y)=\sqrt{XY}\).

Individuals’ utility functions may differ, of course.

We are not saying people actually consult a utility function or maximize a preference relation. That would be a dumb thing to say. We are considering that ‘people behave as if they are maximizing utility functions’; this is (basically) equivalent to saying “people choose based on preferences that satisfy the above assumptions.”

So, what do we really mean? We might consider whether people’s choices are ‘rationalizable’… whether their choices might be explainable by choosing according to a (transitive) preference relation (or maximizing a utility function.)

Defining ‘a rationalizable choice function’:

O-R’s definition:

Note: this definition of rationalizability is distinct from the one I will present when considering game theory. I will give that one a different name.

A choice function is rationalizable if there is a preference relation such that for every choice problem the alternative specified by the choice function is the best alternative according to the preference relation.

Remember: a choice function assigns a choice to every set of alternatives. For example, suppose the following three alternatives are potentially under consideration… \(X = \{a,b,c\}\):

a: an apple and a carrot

b: an apple (and no carrot)

c: three apples, four carrots, and a spiderman costume

There are four possible ‘non-trivial’ choice sets among these alternatives (unfold):

A choice function must assign an individual’s choice to any of the above choice sets. For example it might assign

Could this (above) be ‘rationalised’ by any preferences? (Unfold)

No, it cannot! See O-R example 3.1 for a further discussion.
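For a small set like \(X = \{a,b,c\}\), rationalizability can be checked by brute force: try every strict ranking and see whether the choice function always picks the top-ranked available alternative. The two choice functions below are hypothetical examples of my own (not necessarily the one discussed above), and restricting attention to strict rankings is a simplifying assumption.

```python
from itertools import combinations, permutations

X = ["a", "b", "c"]

def choice_problems(X, min_size=2):
    """All subsets of X with at least min_size elements (the 'non-trivial' choice sets)."""
    return [frozenset(s) for k in range(min_size, len(X) + 1)
            for s in combinations(X, k)]

def rationalizing_orders(c):
    """Strict rankings (best first) under which c picks the top-ranked element of every set."""
    return [order for order in permutations(X)
            if all(min(A, key=order.index) == c[A] for A in c)]

# Two hypothetical choice functions (not taken from O-R).
c_consistent = {frozenset("ab"): "a", frozenset("ac"): "c",
                frozenset("bc"): "c", frozenset("abc"): "c"}
c_cyclic = {frozenset("ab"): "a", frozenset("bc"): "b",
            frozenset("ac"): "c", frozenset("abc"): "a"}

print(len(choice_problems(X)))             # number of non-trivial choice sets
print(rationalizing_orders(c_consistent))  # rankings that rationalise it
print(rationalizing_orders(c_cyclic))      # empty list: not rationalizable
```

The second choice function picks a over b, b over c, and c over a in the pairwise problems, so no transitive ranking can generate it.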

In fact, in observed data, people seem to often make choices with intransitivities.

There are other ‘choice rules’ that we might imagine people use that also cannot be rationalised by any preferences. For example (O-R 2.3), … suppose…

DR Todo: I hope to add a video here discussing this

From any set A of available alternatives, the individual chooses a median alternative.

This example relates to a proposed ‘middle-choice’ norm in behavioral economics. (Unfold…)

It is at least folk wisdom that customers tend to choose ‘middle choices’ (e.g., a ‘medium Coke’) among a set of alternatives. Note that this means that even unchosen ‘irrelevant alternatives’ may affect their behavior.

E.g., if offered “Set S”: {10ml, 50ml, 100ml} this person chooses 50ml.

But if offered “Set L” {50ml, 100ml, 200ml}, this person chooses 100ml.

But the 50ml and 100ml were available in both sets S and L. In this hypothetical example, the ‘irrelevant alternative’ caused their choice to change!

As O-R show (Example 2.2, p 19), this choice function cannot be rationalised (unless we re-define the choices in terms of their position, see O-R discussion.)

Is this rationalizable? :

The waiter informs him … he may have either broiled salmon at 2.50 USD or steak at 4.00 USD. … he elects the salmon. Soon after the waiter returns from the kitchen … to tell him that fried snails and frog’s legs are [also available] for 4.50 USD each. … his response is “Splendid, I’ll change my order to steak”.

This comes from Luce and Raiffa (1957), as cited by O-R.

O-R (Ex 2.3) explain why this seemingly perverse behavior could be rationalizable, because of the information conveyed.

O-R’s example ‘2.4 Partygoer’ is also interesting; I think the point here is that in a social setting these alternatives cannot be depicted simply as discrete options. The ‘offers made and accepted’ is part of the value of each alternative.

This translation from straightforward payoffs to payoffs that depend on the nature of the strategic interaction is explored in my paper ‘Losing Face’ (Gall and Reinstein 2020) (as well as in much previous work; I’m just self-promoting here).

Property alpha defined:

The above ‘violations’ and the discussion of ‘irrelevant alternatives’ relate to what O-R refer to as:

Property \(\alpha\) :

Given a set X, a choice function \(c\) for \(X\) satisfies property \(\alpha\) if …

for any sets \(A\) and \(B\) with \(B \subset A \subseteq X\) and \(c(A) \in B\) we have \(c(B) = c(A)\).

Explaining the above notation:

“a choice function \(c\) for \(X\)”: a function \(c\) that takes any of the choice sets made from the elements of \(X\) as an argument, and outputs the choice made.

“for any sets \(A\) and \(B\) with \(B \subset A \subseteq X\)”: For any choice sets where \(B\) is a ‘proper subset’ of \(A\), meaning that set \(A\) has all the elements that set \(B\) has, and \(A\) also has at least one more element.

For example, B: {fish, giraffe}, is a proper subset of A: {fish, giraffe, turnip}.

“\(A \subseteq X\)”: \(A\) is a subset of \(X\)… it contains none, some or all of the elements in \(X\) (but \(A\) doesn’t contain any elements that are not in \(X\))

“… and \(c(A) \in B\):” Where the choice made when presented with set \(A\) is also part of choice set \(B\).

“… we have \(c(B) = c(A)\)”: the same element must then be chosen when presented with sets \(A\) or \(B\).

In other words: Property-\(\alpha\) requires that if you make a particular choice (say ‘fish’) from a larger menu (\(A\)) you must also choose ‘fish’ when you are given a reduced version of that menu.

Going in the other direction… Suppose I choose ‘fish’ from a small menu (\(B\)). Next I am presented a menu \(A\) that includes all of menu \(B\) plus (e.g.) some ‘dairy choices’. Property \(\alpha\) says that if I don’t choose any of the new ‘dairy choices’ on this larger menu, I must continue to choose the fish. The ‘irrelevant alternatives’ can not affect my choice.
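Property \(\alpha\) can also be checked mechanically on a small set. The sketch below compares a utility maximiser with the ‘median chooser’ from earlier. The drink sizes (including the extra 300ml option), the function names, and the lower-median tie-break for even-sized sets are all assumptions made for illustration.

```python
from itertools import combinations

def nonempty_subsets(X):
    """All choice problems: every nonempty subset of X."""
    return [frozenset(s) for k in range(1, len(X) + 1)
            for s in combinations(sorted(X), k)]

def alpha_violations(c, X):
    """Pairs (A, B) with B a proper subset of A and c(A) in B, yet c(B) != c(A)."""
    subsets = nonempty_subsets(X)
    return [(A, B) for A in subsets for B in subsets
            if B < A and c(A) in B and c(B) != c(A)]

def c_max(A):
    """Utility maximiser with u(x) = x: always picks the largest size."""
    return max(A)

def c_median(A):
    """Median chooser; for even-sized sets we take the lower median (an assumption)."""
    ordered = sorted(A)
    return ordered[(len(ordered) - 1) // 2]

X = {10, 50, 100, 200, 300}  # hypothetical drink sizes in ml

print(len(alpha_violations(c_max, X)))     # no violations
print(len(alpha_violations(c_median, X)))  # at least one violation
```

For instance, the median chooser picks 100ml from the full menu, but 200ml from the reduced menu {100, 200, 300}, even though 100ml is still available there.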

O-R Proposition 2.1:

Every rationalizable choice function satisfies property \(\alpha\).

If (A) a choice function is rationalizable then (B) it must satisfy property \(\alpha\). \((A) \rightarrow (B)\).

Proof (sorry, a bit long-winded):

People may say they choose by “choosing the first option that meets a level that is ‘good enough’, and then I quit”. O-R formalise this in their section 2.4. This choice procedure is rationalizable. (You can skim this; you don’t need to do the proof.)

This should not be confused with ‘search models’ and the ‘optimal stopping problem’. We are not considering search costs here.

One argument for why choices must reflect transitive preferences is that “behavior that is inconsistent with rationality could produce choices that harm the individual” (O-R, p. 23). Perhaps we should imagine that biological evolution would have ‘weeded out’ such individuals, or that their parents would teach them to do otherwise.

Consider:

If you found someone who strictly preferred an apple to a banana, a banana to a cherry, and a cherry to an apple, you could make a lot of money out of them. How would you illustrate this?

This is easiest to illustrate if we allow these goods to be non-discrete and assume ‘more is always better’… this is a bit of a fudge, but it’s OK.

It works as follows:

Obtain (or borrow) an apple, a banana and a cherry.

Offer to give this person an apple in exchange for their banana plus a tiny little extra small unit of banana (or ‘money’).

Next offer them a cherry for their apple plus a bit extra.

Next offer a banana for their cherry plus a bit extra.

… Keep repeating the three trades above, until you’ve drained all of their resources.
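The procedure above can be simulated in a few lines. This is only a sketch under stated assumptions: the person starts with a banana and 100 pence, accepts any swap to a strictly preferred fruit as long as the fee is 1 penny, and all names and amounts are invented for illustration.

```python
# Cyclic strict preferences: apple over banana, banana over cherry, cherry over apple.
# Key: the fruit currently held. Value: the strictly preferred fruit we offer in exchange.
better_offer = {"banana": "apple", "apple": "cherry", "cherry": "banana"}

def money_pump(start_fruit="banana", wealth_pence=100, fee_pence=1):
    """Keep swapping round the cycle for a small fee until the money runs out."""
    holding, trades = start_fruit, 0
    while wealth_pence >= fee_pence:
        holding = better_offer[holding]  # accepted: the offered fruit is strictly preferred
        wealth_pence -= fee_pence
        trades += 1
    return holding, wealth_pence, trades

holding, wealth, trades = money_pump()
print(holding, wealth, trades)
```

After 100 trades the person has no money left and is holding a single piece of fruit, just as at the start; every individual trade looked like an improvement to them.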

O-R present this slightly more formally (you can skip the formality if you like).

An interesting video discussing this

Becoming a money pump, Nick Chater, Warwick business school

An interesting discussion of ‘money pumps’ (and ‘Dutch books’) in terms of preferences and financial markets. I’ve seen this chocolate beverage argument presented for why people should not have ‘wide zones of indifference’.

O-R next present a case where a third ‘decoy’ option has a substantial effect on people’s choices, even though people rarely choose it!

The standard story: “A” is better than “B” in one dimension, but worse in another dimension; people are evenly split between A and B.

However, suppose a third alternative “C” is added to the choice set, where C is ‘worse in all ways than B’ (but still better than A in some ways). Now people tend to choose B.

This ‘decoy effect’ (aka, “asymmetrically dominated choice”, aka “the attraction effect” ) is widely discussed in behavioral economics and marketing.

Dan Ariely gives a presentation on this below; see also this article in ‘The Conversation’.

O-R next discuss ‘framing effects’ in the context of roulette wheel choices; people make distinct choices in choice problems that are identical, other than the way that they are described.

The ‘decoy effect’ is (arguably) not a framing effect, because the choice set is actually changing.

We will come back to framing effects when we consider decisions about uncertainty, and the idea of ‘loss aversion’.

Clearly these cannot reflect rationalizable preferences unless we make the description part of the preference itself.

Blackorby, Charles, Walter Bossert, and David Donaldson. 1995. “Intertemporal Population Ethics: Critical-Level Utilitarian Principles.” Econometrica: Journal of the Econometric Society, 1303–20.

Gall, Thomas, and David Reinstein. 2020. “Losing Face.” Oxford Economic Papers 72 (1): 164–90.